I love the smell of metrics in the morning.

I spent years in a metrics-driven organisation with analysis deeply rooted in the company’s DNA... and it was just great. My user experience design team was constantly occupied with lots of small tasks focused on the optimisation of the UI and though this is not something you can brag about during a family dinner (hardly noticeable things aren’t particularly admired), it let our users perform better every day and damn... it was bringing the company money! We did our job well.

Despite all this experience, I struggled to set up an analytical framework for UXPin - my own startup. By not measuring things properly, we were pushing our company into the void of lost opportunities, money and users’ trust. It was a nightmare for me both as an entrepreneur and a user experience designer.

I started to wonder: if we’re creating The UX Design App, shouldn’t we be an example of a great approach to user experience design? Why do we keep failing? What’s the difference between our startup and my previous job? The answer was simple: the difference is fundamental. Startups are just different.

It’s not the difference in team size or revenue numbers; it’s the difference in dynamics, uncertainty and your personal feelings.

The last of these is probably the most influential. In your own startup some things are unnaturally hard, because you care too much. Caring too much brings chaos on board. Chaos makes the simple complicated. Next thing you know: you’re in trouble.

Besides, in a startup you’re so busy building that you sometimes forget about thinking. Ain’t that shamefully right, fellow entrepreneurs? Who doesn’t get caught by this vicious trap from time to time? I know I do.

UXPin has come a long way - from a fresh product with problems to solid traction and amazing growth in sales. I’d like to share with you how we used specific metrics to stay focused and really accelerate our business. Hopefully, it will spare you a couple of sleepless nights and give your project a proper push.

Crossroads of art and science

User experience design lies at the crossroads of art and science. It’s a magical mixture of visual art, hard-boiled psychology and numbers. Drink it, click your heels and you’ll soon be in the right place, Dorothy.

User experience design is powerful, but honestly, there’s not a lot of mystery here. On a very general level, successful UX designers do just three things:

- Measure human behaviour and act upon metrics;

- Come up with solutions to well understood problems, basing their ideas on psychological knowledge and data gathered in research (solutions are visualised as prototypes, wireframes, sketches, diagrams etc.);

- Communicate with other members of the team to facilitate design collaboration.

Do the same and your startup will flourish and grow rapidly. Sounds simple, right? Unfortunately, sometimes the road is unpleasantly rough. Measuring human behaviour in a startup is hard to do and easy to forget.

I’ve recently had a conversation (not the first one of its sort) on why the results of the work of a very talented designer don’t bring home the bacon (happy users and money). The design looks great, most of the decisions are backed up with reasonable argumentation, it’s shiny, personal and seems to be clever. What could be wrong? Why doesn’t it simply fly?

It’s very easy to lose faith in the designer’s talent, the users, or, God forbid, the design itself. Too easy. We have this inner urge to blame, but believe me - that’s not the right path to take. This shiny design might have a certain value, it just doesn’t perform well enough. Blaming the designer would only obscure the picture. Perhaps we’re just one small tweak away from a great-looking, high-performance interface. How could we know this, if not by carefully measuring performance, gathering the right data and drawing a valid conclusion?

Make sure that you know what your design is supposed to do (choose one main thing to start with), choose one metric that can tell you if people succeed and measure it. The numbers don’t look too good? Try to figure out what’s going wrong (classic usability testing might come in handy) and correct it. It’s almost always that easy.

Measurement is a habit that you need to grow and in time you’ll get better and better at choosing the right things and ways to measure them. Your startup will flourish.

In our story, the particularly talented designer didn’t measure and didn’t optimise his designs. No wonder there was no bacon at the table. He remained unsuccessful because he forgot about one ingredient of our magical mixture of user experience design: the numbers, a measurement of user behaviour. That’s the easiest way to fail.

You don’t want to copy his approach. Especially when your business is at stake.

To measure or not to measure?

Big players measure a lot. Every step a user takes, every tiny business occurrence, cash flow... no doubt they gather powerful data and it costs them a lot. Dozens of analysts are using every working hour to measure everything that’s measurable.

I assume, as an entrepreneur, you can’t afford an army of analysts. I’m pretty sure you have a lot of things worth measuring, but not nearly enough people and time to measure them.

Don’t worry. That’s not the problem.

Measuring too many things is paralysing for just about any company and it’s a death walk for a startup. You measure to validate decisions and decrease the risk of failure. The minimal amount of information necessary for a certain decision is good enough. Over-thinking decisions doesn’t further decrease the risk of action, as Daniel Kahneman pointed out in his recent book. Just listen to your data and make a decision. Do it.

Testing adds more value to your company than over-thinking. It might sound ridiculous, but only testing lets you operate on real data, not on a set of assumptions. The lean startup methodology draws a lot from this approach, which is pretty common in the world of science. For example, psychology relies heavily on experimental methods to test theories about human behaviour.

Measuring only the right things is one of the competitive advantages that you can have over bigger players. They have way too much money and too many resources to stay focused! When it comes to analysis, being small actually helps! You can’t allow any waste, because it may put you out of the business. When big players dive into data, postponing the decision for months, you can test a couple of assumptions based on your small, but accurate, set of metrics. Isn’t that just great?

Agility is your greatest power. Use it wisely and may the force be with you.

All right, but how do you decide what to measure? There are two sets of metrics that you need to take into account: economic metrics and behavioral metrics.

Economic metrics

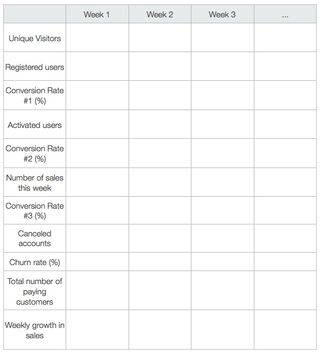

They must clearly show the state of your business. The choice of this set of metrics strongly depends on the stage of your company. Take a look at following table:

Right now UXPin focuses strongly on the number of paying customers, as we’re vastly interested in tracking our progress in encouraging users to join us. The number of people making the decision to use UXPin and overcoming the obstacle of reaching for the credit card is currently more important for us than monthly revenue. The number of paying customers lets us know if our target group responds to UXPin products in a positive way. Luckily it does!

We’re getting to the point at which the whole business model will become scalable and we’ll have enough data confirming that we’re on the right track. It will be the time of LTV (user’s Life-Time Value) and ARPU (Average Revenue Per User) optimisation, which should elevate our business to the next level.
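To make the two acronyms concrete, here is a minimal sketch of how ARPU and a rough LTV could be calculated. The figures are invented for illustration, and the LTV formula is a common simplification that assumes a constant monthly churn rate (so the expected customer lifetime is 1 divided by churn). It is not necessarily how UXPin calculates these values.

```python
def arpu(total_revenue: float, active_users: int) -> float:
    """Average revenue per user for a single period (here: one month)."""
    if active_users <= 0:
        raise ValueError("active_users must be positive")
    return total_revenue / active_users


def simple_ltv(monthly_arpu: float, monthly_churn_rate: float) -> float:
    """Rough lifetime value of a user.

    Assumption: churn is constant, so the expected lifetime in months
    is 1 / monthly_churn_rate.
    """
    if not 0 < monthly_churn_rate <= 1:
        raise ValueError("monthly_churn_rate must be in (0, 1]")
    return monthly_arpu / monthly_churn_rate


# Made-up numbers, for illustration only.
monthly_arpu = arpu(total_revenue=12_000.0, active_users=400)
ltv = simple_ltv(monthly_arpu, monthly_churn_rate=0.05)

print(f"ARPU: {monthly_arpu:.2f}")
print(f"LTV:  {ltv:.2f}")
```

Even a back-of-the-envelope version like this shows the two levers you can pull: raise revenue per user, or keep users longer.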

Behavioral metrics

Behavioural metrics are meant to track very specific actions of your users. Whenever you’re about to launch a new feature or product, consider:

- What’s the main use case? (It should be derived from your C-P-A hypothesis)

- How do you measure whether users are able to succeed in the main use case?

Your goal is to gather data that will let you assess the new feature or product's performance. For example, if it’s a new sign-up form, track:

- The number of successful sign-ups

- The conversion rate

- The number and type of errors
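As a rough sketch, the three sign-up metrics above could be derived from a simple event log. The event names (`form_viewed`, `signup_success`, `signup_error`) and the data are hypothetical; your analytics tool will have its own format.

```python
from collections import Counter

# Hypothetical event log: (visitor_id, event_name, detail).
events = [
    ("u1", "form_viewed", None),
    ("u1", "signup_error", "invalid_email"),
    ("u1", "signup_success", None),
    ("u2", "form_viewed", None),
    ("u2", "signup_error", "password_too_short"),
    ("u3", "form_viewed", None),
    ("u3", "signup_success", None),
    ("u4", "form_viewed", None),
    ("u4", "signup_error", "invalid_email"),
]


def signup_metrics(events):
    """Return successful sign-ups, conversion rate and errors grouped by type."""
    # Count unique visitors, not raw events, so retries don't inflate numbers.
    viewers = {visitor for visitor, name, _ in events if name == "form_viewed"}
    converted = {visitor for visitor, name, _ in events if name == "signup_success"}
    errors = Counter(detail for _, name, detail in events if name == "signup_error")
    conversion_rate = len(converted) / len(viewers) if viewers else 0.0
    return {
        "successful_signups": len(converted),
        "conversion_rate": conversion_rate,
        "errors_by_type": dict(errors),
    }


metrics = signup_metrics(events)
print(metrics)
```

Grouping errors by type is what makes the last metric actionable: it points at the specific field that trips people up.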

The number and type of behavioural metrics depend on the project and the hypothesis that you formed during the design process. Remember - less is more. You just need metrics to validate your design hypothesis - don’t track everything or you’ll be lost in data.

When you’re analysing behavioural metrics, you must always take into account economic metrics as well. Most of the features, and certainly all of the products, must add value to the company and you need to make sure they do. This is why you actually track economic metrics, right?

If, after the launch of a certain feature, sales suddenly drop, you’ll need data to check what happened. That’s why it’s particularly important to precede any launch with the implementation of appropriate analytical tools.

Mirror mirror on the wall...

We all love to brag sometimes, right? OK... at least most of us do. Numbers are one of the greatest bragging tools. Their meaning always depends on the context and they’re just so easy to manipulate. If a SaaS application brags about 4 million pageviews, but they don’t have any paying customers, would you call them successful?

I wouldn’t.

The number of pageviews is a typical vanity metric for almost all SaaS applications and many other web startups. What’s a vanity metric? As Brad Smith nicely put it:

“Vanity metrics are things people love to quote and obsess over, even though they're almost entirely useless to your business.”

Vanity metrics make the naive among us feel good, but at the same time they push the whole business into an endless depression of idleness. Vanity metrics are absolutely unactionable and therefore useless. They’re a waste of time that can destroy your startup.

To give you a couple more examples: time on site is a vanity metric, so is the average number of pageviews per user, or the percentage of new visitors.

Some vanity metrics are trickier. At UXPin the “number of projects with comments” was one of them. It seemed to be a reasonable behavioural metric that was supposed to let us check how engaged users were with the commenting feature. Well... it didn’t. The number itself didn’t tell us anything. Some users don’t have people to share a project with, some would rather export a PDF and attach it to a project management tool, etc. This metric couldn’t tell us about those cases and overall it just failed to provide us with the appropriate knowledge to make any decisions. We killed it to stay focused on what’s really important.

That’s my recommendation to you: keep up with the important metrics and kill the vanity ones. Less is more.

Do it over and over again!

After several weeks of madness at UXPin we managed to get off our knees and start to properly measure the right metrics. That was a relief! Finally, I didn’t feel completely stupid and we started to learn from our users. Great! We used Google Analytics and everything that was important was right there; we could see all the metrics with our own eyes.

Did it cause the necessary change? Nope.

Nobody seemed to care about our shiny, super sexy metrics, apart from two UX designers (including me), who cared a little, but not nearly enough. Our approach wasn’t actionable. Metrics were separated from product development cycles, which should never happen!

How can you expect people in your company to care about metrics if you don’t let them see the influence they have? Every product development cycle should result in a positive change of metrics.

Then we came up with a ridiculously obvious idea: why not set goals based on metrics and check if we’re on the right track weekly? This single thought set our minds on fire and we started weekly measurement cycles with monthly and quarterly sum-ups.

How could we not have come up with the idea of measurement cycles earlier? I have no idea. When we had them up and running they seemed so obvious. After all - you measure to optimise your business, not measure for the sake of mere measurement, and a weekly control of metrics forces the whole company to focus on business optimisation.

That was the shift that we were looking for.

Suddenly, the whole company started to care about our metrics. Goals helped us focus on really important things. They clearly showed where we are and how our work influences business. Metrics became powerfully actionable. If we started to fall short of our predictions, we could take almost immediate action and correct ourselves based on knowledge gathered weekly.
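A weekly check of this kind can be very small. The sketch below compares actual numbers with weekly goals and flags anything that falls short. The metric names, the figures and the 90% threshold are all assumptions made for illustration, not UXPin’s actual funnel.

```python
from dataclasses import dataclass


@dataclass
class WeeklyMetric:
    name: str
    goal: float
    actual: float

    @property
    def attainment(self) -> float:
        """Share of the weekly goal that was reached (1.0 means on target)."""
        return self.actual / self.goal if self.goal else 0.0


def off_track(metrics, threshold=0.9):
    """Return the names of metrics that reached less than `threshold` of goal."""
    return [m.name for m in metrics if m.attainment < threshold]


# Hypothetical week, for illustration only.
week = [
    WeeklyMetric("new sign-ups", goal=200, actual=230),
    WeeklyMetric("activated accounts", goal=120, actual=90),
    WeeklyMetric("new paying customers", goal=25, actual=24),
]

for m in week:
    print(f"{m.name}: {m.actual:.0f} / {m.goal:.0f} ({m.attainment:.0%})")

flagged = off_track(week)
print("Needs attention this week:", flagged)
```

The point is not the tooling but the rhythm: the same short list, reviewed every week, with a clear signal about where to act.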

Here’s the table that we use:

Your table might look different - it depends on your business model and the current stage of your company. We’re a SaaS company with steady growth and decent traction, so this kind of funnel makes sense for us.

Quality comes from conversations

We’ve got the numbers figured out, but there’s another equally important part of analysis that can’t be undervalued if you really aim at designing the best user experience possible. Qualitative testing.

The methodology of science empowers us with a whole range of qualitative research methods (case studies, participant observation, direct observation, unstructured interview, individual in-depth interview, focus groups...), which deserve their own article, or perhaps a whole book. Let’s not focus on each specific research method, but rather the general approach in a startup perspective. After all, again - we’re not aiming at complex knowledge, but actionable results.

Qualitative methods are best for broadening your perspective and filling your mind with fresh (often surprising) ideas, derived from your target group. They might give you completely new feature or product concepts, or point out lots of bugs in existing ones. Either way you’ll get unique knowledge that will let you work on the quality of your product.

At UXPin we believe that the quality of the product comes from conversation. Constant, ongoing conversation with users. Only by having a proper dialogue can you work outside of the box formed by your product, see how you compare with your competitors and learn the real problems of your target group. It’s a powerful type of research.

The important thing is to be consistent. Just as with economic and behavioural metrics, you want to perform qualitative tests regularly.

Choose a method and implement it in your measuring cycles. At UXPin we do:

- Classic usability testing once a month (thanks to the helpful local UX design community! Cheers guys!)

- Individual in-depth interviews with customers every two weeks.

Each session is always extremely refreshing and has a strong share in our current growth.

Tools, tools, tools

You know how we approached measuring crucial things at UXPin; you know where it has led us. I sincerely hope that this knowledge will help your startup reach new heights in user experience design.

To help you start, here’s a short list of tools that we find really valuable.

Economic and behavioral metrics

Usability Testing

A/B testing

Good luck!