An introduction to frontend testing

When and how to use different frontend code testing options.

Testing frontend code is still a confusing practice to many developers. But with frontend development becoming more complex and with developers responsible for stability and consistency like never before, frontend testing must be embraced as an equal citizen within your codebase. We break down your different testing options and explain what situations they are best used for.

Frontend testing is a blanket term that covers a variety of automated testing strategies. Some of these, like unit and integration testing, have been an accepted best practice within the backend development community for years. Other strategies are newer, and stem from the changes in what backend and frontend development are used for now.

By the end of this article, you should feel comfortable assessing which testing strategies fit best with your team and codebases. The unit and acceptance test examples are written using the Jasmine framework, but the rules and processes are similar across most testing frameworks.

01. Unit testing

Unit testing, one of the testing veterans, is at the lowest level of all testing types. Its purpose is to ensure the smallest bits of your code (called units) function independently as expected.

Imagine you have a Lego set for a house. Before you start building, you want to make sure each individual piece is accounted for (five red squares, three yellow rectangles). Unit testing makes sure that individual pieces of code, such as input validations and calculations, work as intended before you build the larger feature.

It helps to think about unit tests in tandem with the ‘do one thing well’ mantra. If you have a piece of code with a single responsibility, you likely want to write a unit test for it.

Let’s look at the following code snippet, in which we are writing a unit test for a simple calculator:


describe("Calculator Operations", function () {
  it("Should add two numbers", function () {
    Calculator.init();
    var result = Calculator.addNumbers(7, 3);
    expect(result).toBe(10);
  });
});

In our Calculator application, we want to ensure that each calculation always works the way we expect, on its own. In the example, we want to make sure that we can always accurately add two numbers together.

The first thing we do is describe the series of tests we’re going to run by using Jasmine’s describe. This creates a test suite – a grouping of tests related to a particular area of the application. For our calculator, we will group each calculation test in its own suite.

Suites are great not only for code organisation, but also because they let you run each suite on its own. If you’re working on a new feature for an application, you don’t want to run every test during active development, as that would be very time consuming. Running suites individually lets you develop more quickly.

Next, we write our actual tests. Using the it function, we describe the feature or piece of functionality we are testing; Jasmine calls each of these a spec. Our example tests the addition function, so we write scenarios that confirm it works correctly.

We then write our test assertion, which is where we check that our code behaves as we expect. We initialise our calculator and call addNumbers with the two numbers we wish to add. We store the returned value in result, and then use expect and toBe to assert that it equals the number we expect (in our case, 10).

If addNumbers fails to return the correct figures, our test will fail. We would write similar tests for our other calculations – subtraction, multiplication, and so on.

02. Acceptance tests

If unit tests are like checking each Lego piece, acceptance tests are checking if each stage of building can be completed. Just because all the pieces are accounted for doesn’t mean that the instructions are properly executable and will allow you to build the final model.

Acceptance tests go through your running application and ensure designated actions, user inputs and user flows are completable and functioning.

Just because our application’s addNumbers function returns the right number doesn’t mean the calculator interface will definitely give the user the right result. What if our buttons are disabled, or the calculation result doesn’t get displayed? Acceptance tests help us answer these questions.

describe("Sign Up Failure state", function () {
  it("Shouldn't allow signup with invalid information", function () {
    // Load the page that contains the sign-up form
    var page = visit("/home");

    // Enter an invalid email address and submit the form
    page.fill_in("input[name='email']", "Not An Email");
    page.click("button[type=submit]");

    // The error message should now be visible (no longer hidden)
    expect(page.find("#signupError").hasClass("hidden")).toBeFalsy();
  });
});

The structure looks very similar to our unit test: we define a suite with describe, then write our test within the it function, then execute some code and check its outcome.

Rather than testing specific functions and values, however, here we’re testing whether a particular workflow (a sign-up flow) behaves as expected when we fill in some bad information. Smaller pieces of logic are also involved, such as the form validation (which may have its own unit tests) and the code that displays our error state, represented by the element with the ID signupError.

Acceptance tests are a great way to make sure key experience flows always work correctly. They also make it easy to add tests around edge cases, which can help your QA team find those cases in your application.

When considering what to write acceptance tests for, your user stories are a great place to start. How does your user interact with your website, and what is the expected outcome of that interaction? This differs from unit testing, which is better matched to the requirements of individual functions, such as the rules around a validated field.

03. Visual regression testing

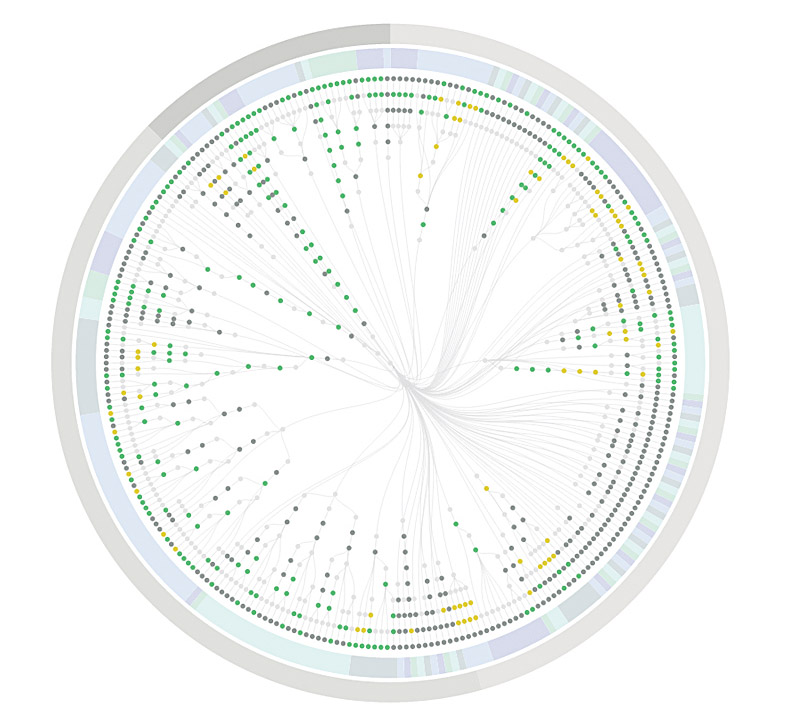

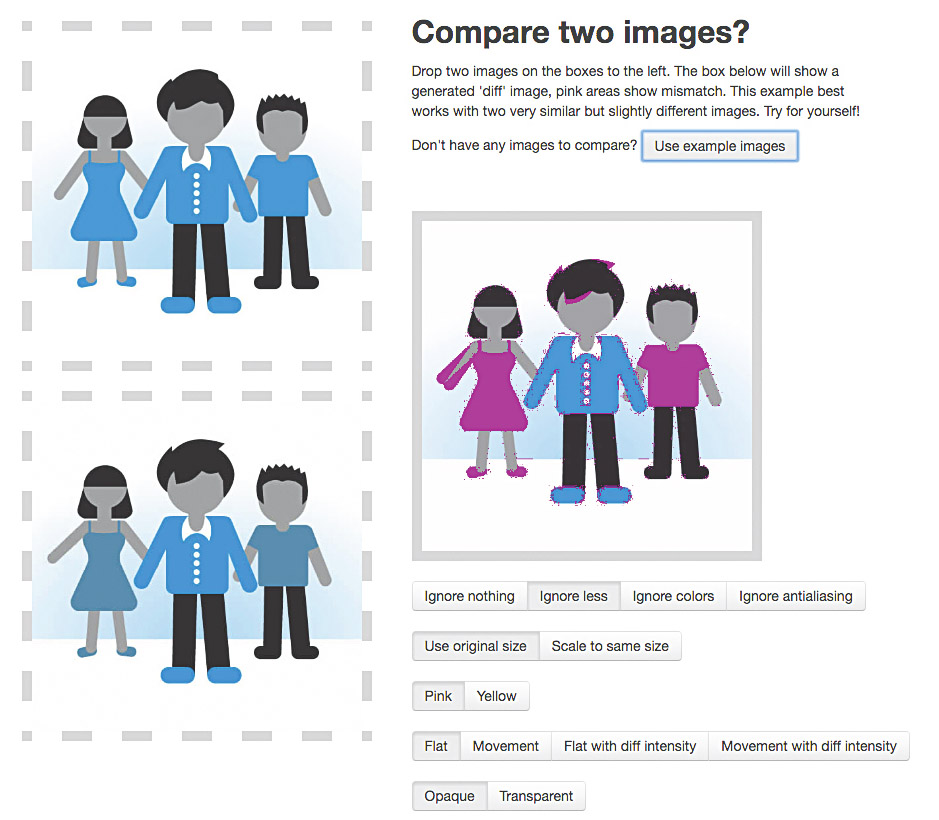

As mentioned in the introduction, some types of testing are unique to the frontend world. The first of these is visual regression testing. This doesn’t test your code, but rather compares the rendered result of your code – your interface – with the rendered version of your application in production, staging, or a pre-changed local environment.

This is typically done by comparing screenshots taken in a headless browser (a browser that runs without a visible user interface, so it can run on a server or build machine). Image comparison tools then detect any differences between the two shots.
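At its core, the comparison step checks pixels. A minimal sketch, assuming both screenshots are already decoded into same-sized RGBA byte arrays (PNG decoding is left out); diffScreenshots and its tolerance parameter are hypothetical and far simpler than what real comparison tools do (they typically also handle anti-aliasing, ignored regions and so on):

```javascript
/**
 * Compares two RGBA pixel buffers of the same dimensions.
 * A pixel counts as "different" if any channel differs by more than `tolerance`.
 * Returns the number of differing pixels and their share of the image in percent.
 */
function diffScreenshots(baseline, current, width, height, tolerance = 0) {
  const expectedLength = width * height * 4; // 4 bytes per pixel: R, G, B, A
  if (baseline.length !== expectedLength || current.length !== expectedLength) {
    throw new Error("Both screenshots must be width x height RGBA buffers");
  }

  let differentPixels = 0;
  for (let i = 0; i < expectedLength; i += 4) {
    for (let channel = 0; channel < 4; channel++) {
      if (Math.abs(baseline[i + channel] - current[i + channel]) > tolerance) {
        differentPixels++;
        break; // one differing channel is enough to flag this pixel
      }
    }
  }

  const mismatchPercentage = (differentPixels / (width * height)) * 100;
  return { differentPixels, mismatchPercentage };
}

// Usage: two 2x1 "screenshots"; the second pixel changes from white to red.
const before = new Uint8ClampedArray([255, 255, 255, 255, 255, 255, 255, 255]);
const after = new Uint8ClampedArray([255, 255, 255, 255, 255, 0, 0, 255]);
console.log(diffScreenshots(before, after, 2, 1));
// → { differentPixels: 1, mismatchPercentage: 50 }
```

A test would then fail when mismatchPercentage exceeds some threshold you choose, rather than on any single changed pixel.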

With a tool such as PhantomCSS, your tests tell the test runner where to navigate and when to take screenshots, and the framework then shows you any differences that appear in those views.

casper.start("/home").then(function () {
  // Initial state of form
  phantomcss.screenshot("#signUpForm", "sign up form");

  // Hit the sign up button (should trigger error)
  casper.click("button#signUp");

  // Take a screenshot of the error state
  phantomcss.screenshot("#signUpForm", "sign up form error");

  // Fill in form by name attributes & submit
  casper.fill("#signUpForm", {
    name: "Alicia Sedlock",
    email: "alicia@example.com"
  }, true);
});

casper.then(function () {
  // Runs after the submission has been processed: capture the success state
  phantomcss.screenshot("#signUpForm", "sign up form success");
});

casper.then(function () {
  // Compare every screenshot taken above with its baseline image
  phantomcss.compareAll();
});

casper.run();

Unlike acceptance and unit testing, visual regression testing is hard to benefit from while you’re building something new. Because your UI will see rapid and drastic changes during active development, you’ll likely save these tests for when pieces of the interface are visually complete. As a result, visual regression tests are usually the last tests you write.

Currently, many visual regression tools require a bit of manual effort. You may have to run your screenshot capture before you start development on your branch, or manually update baseline screenshots as you make changes to the interface.

This is simply because of the nature of development – changes to the UI may be intentional, but tests only know ‘yes, this is the same’ or ‘no, this is different’. However, if visual regressions are a pain point within your application, this approach may save your team time and effort overall, compared to constantly fixing regressions.
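The "same or different" behaviour, and why baselines need manual updating, can be sketched in a few lines. This is a hypothetical helper, not how PhantomCSS or any other tool stores baselines: it keeps baselines in an in-memory Map keyed by screenshot name and compares raw bytes, and the update flag stands in for the manual "accept this change" step:

```javascript
// Hypothetical baseline store: screenshot name -> image bytes.
// A real tool would keep baseline images on disk alongside the tests.
const baselines = new Map();

/**
 * Checks a newly captured screenshot against its baseline.
 * Returns "baseline-created", "baseline-updated", "same" or "different".
 */
function checkScreenshot(name, imageBytes, { update = false } = {}) {
  const image = Buffer.from(imageBytes);

  // First capture (e.g. before development starts) or an intentional change:
  // store this image as the new reference.
  if (!baselines.has(name) || update) {
    const status = baselines.has(name) ? "baseline-updated" : "baseline-created";
    baselines.set(name, image);
    return status;
  }

  // Otherwise the check can only say "same" or "different";
  // it has no way of knowing whether a difference was intended.
  return baselines.get(name).equals(image) ? "same" : "different";
}

// Usage with tiny stand-in "images" (real screenshots would be PNG bytes).
console.log(checkScreenshot("sign up form", [1, 2, 3])); // baseline-created
console.log(checkScreenshot("sign up form", [1, 2, 4])); // different (intended or not?)
console.log(checkScreenshot("sign up form", [1, 2, 4], { update: true })); // baseline-updated
console.log(checkScreenshot("sign up form", [1, 2, 4])); // same
```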

04. Accessibility and performance tests

As the culture and awareness around frontend testing grows, so does our ability to test various aspects of the ecosystem. Given the increased focus on accessibility and performance in our technical culture, integrating these checks into your test suite helps ensure they remain a priority.

If you’re having issues enforcing performance budgets or accessibility standards, this is a way to keep these requirements in the forefront of people’s minds.
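A performance budget is a set of limits that measured metrics must stay under, with the check failing when any limit is exceeded. The sketch below shows that logic with a hypothetical checkBudget helper; the metric names and limits match the perfbudget examples in this article (SpeedIndex 2000, render 400), while the measurements are invented rather than fetched from WebPageTest:

```javascript
// Budget: WebPageTest metric names with upper limits in milliseconds.
const budget = { SpeedIndex: 2000, render: 400 };

/**
 * Compares measured metrics with a budget (hypothetical helper).
 * A metric fails if it is missing or exceeds its limit.
 */
function checkBudget(metrics, budget) {
  const failures = [];
  for (const [metric, limit] of Object.entries(budget)) {
    const value = metrics[metric];
    if (value === undefined) {
      failures.push(`${metric}: no measurement`);
    } else if (value > limit) {
      failures.push(`${metric}: ${value} exceeds budget of ${limit}`);
    }
  }
  return { passed: failures.length === 0, failures };
}

// Made-up measurements for one run: Speed Index is over budget, start render is not.
const result = checkBudget({ SpeedIndex: 2350, render: 380 }, budget);
console.log(result.passed ? "Within budget" : result.failures.join("\n"));
// → SpeedIndex: 2350 exceeds budget of 2000
```

Failing loudly (for example, by exiting with a non-zero code in CI when passed is false) is what keeps the budget in front of the team.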

Both of these checks can either be integrated into your workflow with task runners like Grunt and Gulp, or run semi-manually in your terminal. For performance budgets, a tool like grunt-perfbudget lets you run your site through WebPageTest automatically as part of a specified task.

However, if you’re not using a task runner, you can also grab perfbudget as a standalone NPM module and run the tests manually.

Here’s what it looks like to run this through the terminal:

perfbudget --url http://www.aliciability.com --key [WebPageTest API Key] --SpeedIndex 2000 --render 400

And likewise, setting up through Grunt:

perfbudget: {
  default: {
    options: {
      url: 'http://aliciability.com',
      key: 'WebPageTest API Key',
      // Fail the task if these WebPageTest metrics exceed the budget
      budget: {
        SpeedIndex: '2000',
        render: '400'
      }
    }
  }
}

[...]

grunt.registerTask('default', ['jshint', 'perfbudget']);

The same two options are available for accessibility testing. With Pa11y, you can either run the pa11y command in your terminal for output, or set up a task to automate this step. In the terminal:

pa11y aliciability.com

// As a JavaScript command after npm install
var pa11y = require('pa11y'); // load pa11y
var test = pa11y(); // create a test runner (options can be passed in here)
test.run('aliciability.com', function (error, results) {
  if (error) {
    return console.error(error);
  }
  // Log or parse your results
  console.log(results);
});

Most tools in these categories are fairly plug-and-play, but they also let you customise how the tests are run; for example, you can set them to ignore certain WCAG rules.
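Ignoring rules usually comes down to filtering results by rule code or issue type before deciding whether a run passes. A minimal sketch of that idea with a hypothetical filterIssues helper and invented issues; the codes imitate the HTML_CodeSniffer-style names pa11y reports, but this is not pa11y's implementation or configuration format:

```javascript
// Invented issues for illustration, shaped loosely like pa11y results:
// a rule code, an issue type and a message.
const issues = [
  {
    code: "WCAG2AA.Principle1.Guideline1_1.1_1_1.H37",
    type: "error",
    message: "Img element missing an alt attribute.",
  },
  {
    code: "WCAG2AA.Principle1.Guideline1_4.1_4_3.G18.Fail",
    type: "error",
    message: "Insufficient contrast ratio.",
  },
  {
    code: "WCAG2AA.Principle2.Guideline2_4.2_4_2.H25.2",
    type: "notice",
    message: "Check that the title element describes the document.",
  },
];

// Keep only the issues whose rule code and type are not on the ignore list.
function filterIssues(issues, ignore) {
  const ignored = new Set(ignore);
  return issues.filter(
    (issue) => !ignored.has(issue.code) && !ignored.has(issue.type)
  );
}

// Hypothetical team policy: skip notices, and skip the colour-contrast rule
// because contrast is reviewed elsewhere.
const remaining = filterIssues(issues, [
  "notice",
  "WCAG2AA.Principle1.Guideline1_4.1_4_3.G18.Fail",
]);
console.log(remaining.map((issue) => issue.code));
// → [ 'WCAG2AA.Principle1.Guideline1_1.1_1_1.H37' ]
```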
