With the latest generation of AI art generators riding roughshod over intellectual property rights, it was about time that someone created a tool that can protect artists' work. Sure enough, researchers have now developed a program that aims to prevent artists' styles from being copied.

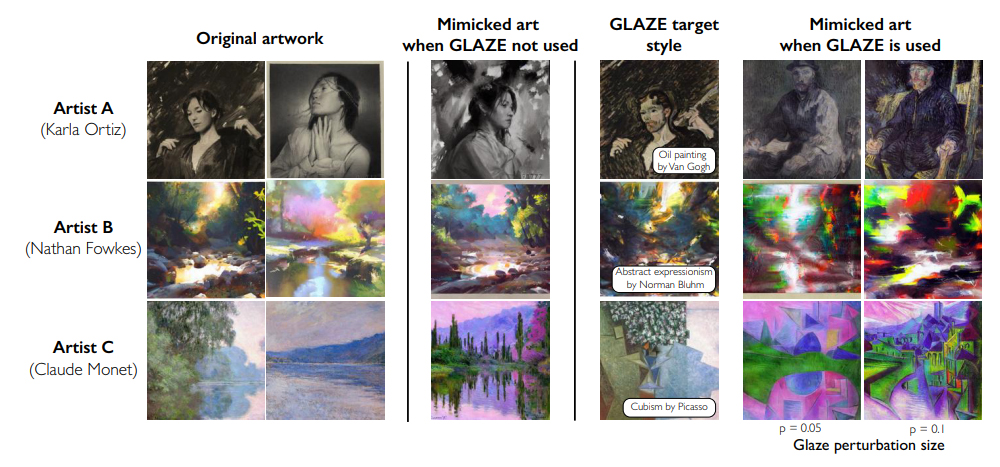

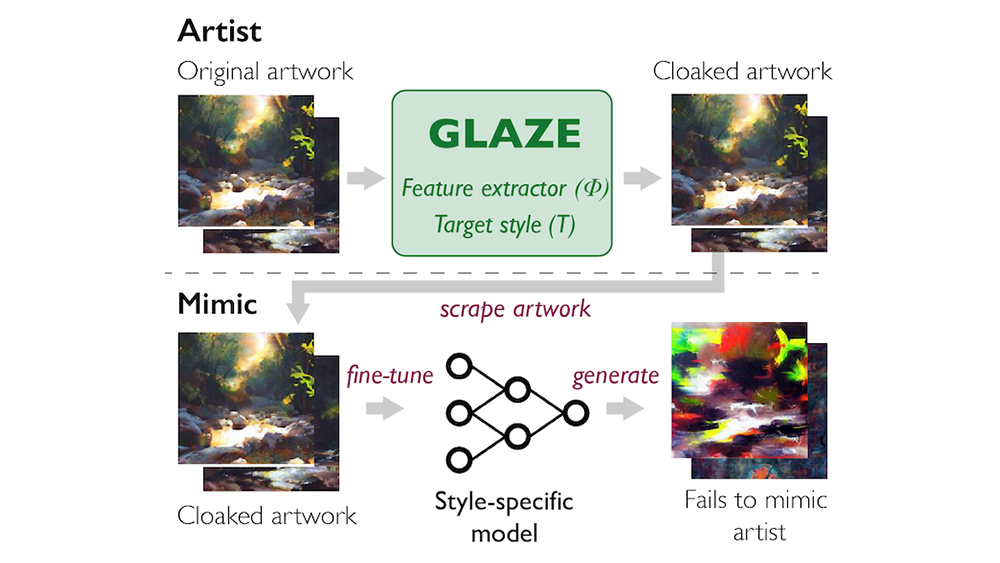

Glaze can protect images from AI art generators by applying subtle modifications. These are almost unnoticeable to the human eye, but they confuse AI models and stop them from replicating the style, at least for now (see how to use DALL-E 2 if you're not up to speed on how text-to-image AI generation works).

Many people involved in visual arts are concerned about the rise of AI image generators. Tools like DALL-E, Midjourney and Stable Diffusion allow anyone to generate an image from a simple text prompt. But they're only able to do that because they've been fed millions of existing photographs and artworks scraped from the web. If you're an artist with images online, your work may well have been used to train one of these tools, helping it learn to replicate your style.

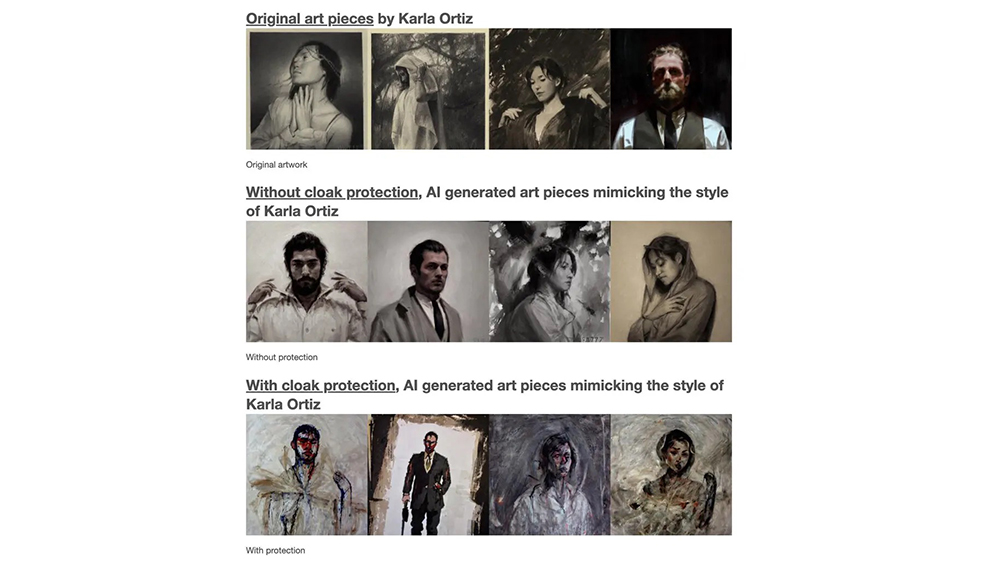

Developed by PhD students and computer science professors at the University of Chicago, Glaze now aims to help prevent that by effectively hiding the artistic style from AI. It enables artists to apply 'style cloaks' to their work before they upload it to the web. When used as training data, these "barely perceptible perturbations" mislead the generative AI model when it tries to mimic an artist's style, causing it to associate the artwork with something else.

The creators of Glaze have worked with over 1,100 professional artists to assess the efficiency and usability of the 'glazing' process. They include Karla Ortiz, an artist and illustrator who is one of three creators who have filed a lawsuit against Stability AI, Midjourney, and DeviantArt in relation to AI art generation.

The team behind Glaze says that it plans to release free Mac and Windows applications in the coming weeks. Artists will need only to download the tool onto their computer (see their full paper).

It's a development that many have been waiting for, along with tools that can identify whether an image has been generated using AI. But some are wondering how long it will work. The developers admit that AI image generation is advancing so quickly that this won't be a permanent solution.

It seems probable that neural networks will be developed that can identify the 'glaze' as an artefact and remove it in a similar way to how watermark removers work. This may be a first step, but it seems that we're heading into a constant race between AI that steals artists' work and AI and other tools that seek to protect it.

Joe is a regular freelance journalist and editor at Creative Bloq. He writes news, features and buying guides and keeps track of the best equipment and software for creatives, from video editing programs to monitors and accessories. A veteran news writer and photographer, he now works as a project manager at the London and Buenos Aires-based design, production and branding agency Hermana Creatives. There he manages a team of designers, photographers and video editors who specialise in producing visual content and design assets for the hospitality sector. He also dances Argentine tango.