Adobe MAX Sneaks 2019: The most mind-boggling tech heading your way

The craziest tech experiments Adobe is currently exploring.

Adobe Sneaks is where the company reveals its most cutting-edge experiments and innovations. These are the features that haven't found their way into products yet, but are pushing the boundaries of what's possible for creative tools.

This year's show was hosted by Adobe's own Paul Trani, alongside Emmy Award-winning writer and comedian John Mulaney, and the brightest minds at Adobe. The 11 experiments we got a taster of were all truly mind-boggling.

"You're getting a preview of what might be a future keynote, and eventually right in front of you," summed up Trani in the introduction. Read on for the Sneaks we're most excited about from this year's show.

For the latest news and product announcements, check out our guide to Adobe MAX 2019. The best experiments from last year's Sneaks are now making their way into Adobe's tools – if you haven't subscribed yet, check out our guide to snagging the best Adobe Creative Cloud discount this year.

Project About Face

A tie-in with Adobe's newly announced Content Authenticity Initiative, Project About Face is a machine learning- and AI-based system that can analyse an image and tell you if and how it has been manipulated. Apply it to a photo, and it will give you a percentage chance that the image has been altered, along with a heatmap showing where the alterations are likely to have been made. Finally, and very impressively, it can approximate what the original photo may have looked like, pre-editing.

Project Image Tango

This experiment enables you to take the texture and pattern from one photo, and apply it to a different image in an intelligent way. In practice, the results are incredible. To demonstrate, the presenter took a very basic line drawing of a bird, and then applied the texture from a real-life photo of a different bird. The tech uses deep learning and AI to intelligently reshape and reform the feathers and colours from the real-life bird and merge them into the form of the hand-drawn bird. The result is something entirely new, which does not exist anywhere else.

The team also showed how you could ask the tool to generate a range of different options based on the same information, to use the tech as an inspiration tool.

Project Fantastic Fonts

This wasn't the flashiest demo, but it could have huge implications for designers if and when it makes its way into a real-life tool. Project Fantastic Fonts opens up entirely new possibilities for manipulating typography in pretty much any way you want. The presenter showed how you could use it to go way beyond bold and italics to adjust any element of a font in a design, from the x-height to the horizontal weight.

They even applied animations, and tilted the iPad the Sneak was being demoed on to control the direction of the animation. All this on real fonts, which are live and not outlined.

Project Go Figure

This experiment in After Effects enables you to transfer motion from a reference video and apply it to an illustrated character. This is all done without the need for mo-cap markers or body suits – all you'll need is a tripod and a camera (perhaps just the one on your phone).

A feature called Body Tracker adds markers to the body and tracks them across different frames within the animation, then intelligently adds a mask around the body. You can then link those tracking points to the same point on a character design, and watch it instantly mimic the movement. Clever.
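To make the idea of linking tracking points to a character more concrete, here is a minimal Python sketch. It is not Adobe's implementation, and the demo did not reveal how Project Go Figure works internally. The `transfer_motion` helper, the joint names and the simple offset approach are all assumptions made purely for illustration. Each character joint is moved by the same amount its linked body point has moved since the first frame.

```python
from typing import Dict, List, Tuple

Point = Tuple[float, float]
Pose = Dict[str, Point]  # joint name -> (x, y) position


def transfer_motion(tracked_frames: List[Pose], character_rest: Pose) -> List[Pose]:
    """Apply each tracked point's offset from frame 0 to the linked character joint.

    Hypothetical helper for illustration only; not an Adobe or After Effects API.
    """
    if not tracked_frames:
        return []
    reference = tracked_frames[0]
    animated = []
    for frame in tracked_frames:
        pose = {}
        for joint, (rest_x, rest_y) in character_rest.items():
            if joint in frame and joint in reference:
                # How far the tracked point has moved since the first frame
                dx = frame[joint][0] - reference[joint][0]
                dy = frame[joint][1] - reference[joint][1]
            else:
                dx, dy = 0.0, 0.0  # joint not tracked: leave it at rest
            pose[joint] = (rest_x + dx, rest_y + dy)
        animated.append(pose)
    return animated


# Two frames of made-up tracking data: the hand moves +10 in x and -5 in y.
video_points = [
    {"hand": (100.0, 200.0), "elbow": (80.0, 220.0)},
    {"hand": (110.0, 195.0), "elbow": (80.0, 220.0)},
]
character = {"hand": (30.0, 40.0), "elbow": (20.0, 50.0)}

frames = transfer_motion(video_points, character)
```

A real tool would also have to account for differences in scale and proportion between the performer and the character, which this sketch ignores.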

Project Light Right

This AI can alter the lighting within your pictures by enabling you to move the light source around. To use it, you need a few different images of a structure. The tool will analyse the photos, learn where the light source is and understand the geometry included in the scene.

You can then shift the lighting in your chosen final image, and Project Light Right will show you how the structure or scene will look, intelligently casting shadows on the fly. The tool can also use video or stock images as its input to build its 3D understanding of the scene.

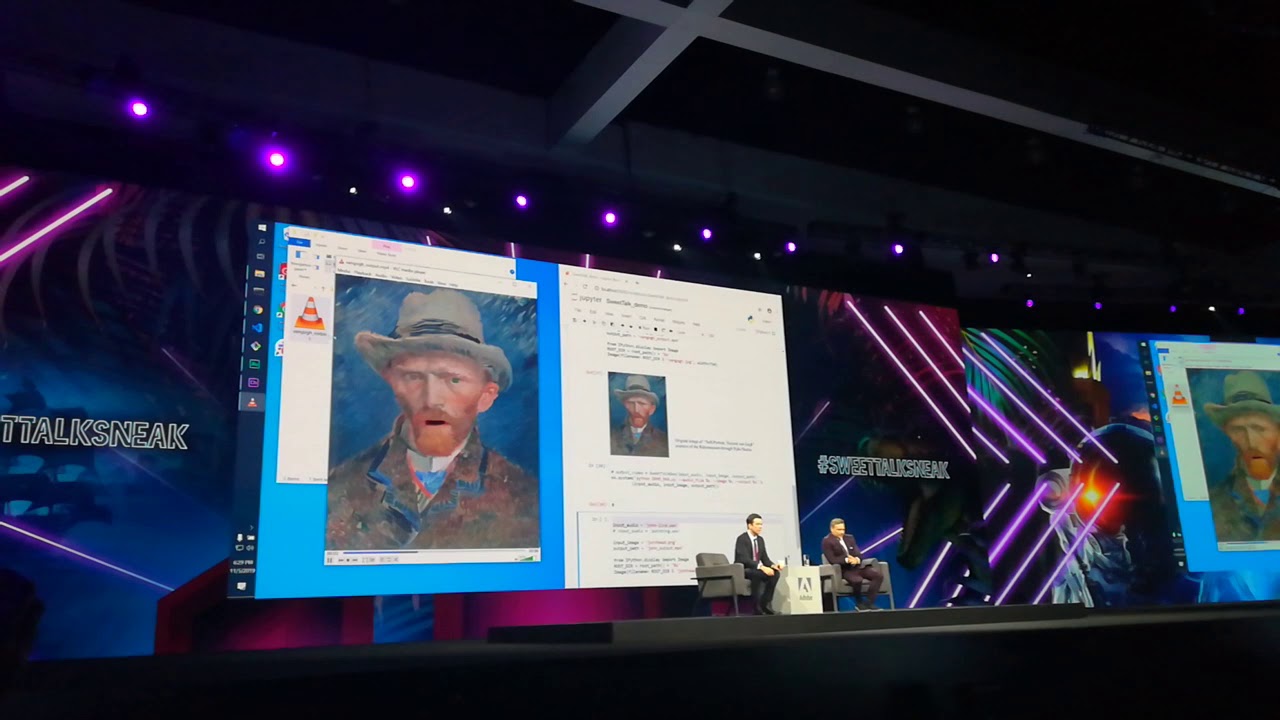

Project Sweet Talk

This Sneak reimagines how we animate, using just the voice. The tool requires only a single static, flat image and an audio file, and predicts how the face would move to produce that audio. The results of the demo were mind-boggling – the presenter used it to bring to life a crude line drawing of a cat, and then moved on to apply it to historical artworks.

Project Pronto

This experiment lets you mock up design ideas by combining AR and video editing. It uses AR tracking to prototype effects, with your device (e.g. an iPad) acting as a 3D controller. The tool will record and respond to the tilting of the device, and you can use it to mock up AR interactions.

Ruth spent a couple of years as Deputy Editor of Creative Bloq, and has also either worked on or written for almost all of the site's former and current design print titles, from Computer Arts to ImagineFX. She now spends her days reviewing small appliances as the Homes Editor at TechRadar, but still occasionally writes about design on a freelance basis in her spare time.