Can you tell how Photoshopped these celebrity magazine covers really are?

We all know that images used in ads and on magazine covers are routinely Photoshopped. However, with the exception of botched jobs that make the manipulation all too obvious, most people can't tell how (or how much) an image has been retouched. But it seems AI can help us with that.

AI has been the source of much controversy of late, but as well as creating manipulations of its own, it can also be trained to unearth them. A while ago, researchers trained AI to detect the use of Photoshop, and now someone's put it to the test on magazine covers featuring some of the best-known celebrity beauty icons.

Adobe's Photoshop is so ubiquitous that it's become a generic verb used to refer to all kinds of photo editing. Almost all images are edited in some way, whether it's adjusting colours or removing a background, but the most controversial use of Photoshop is for retouching, particularly on human figures.

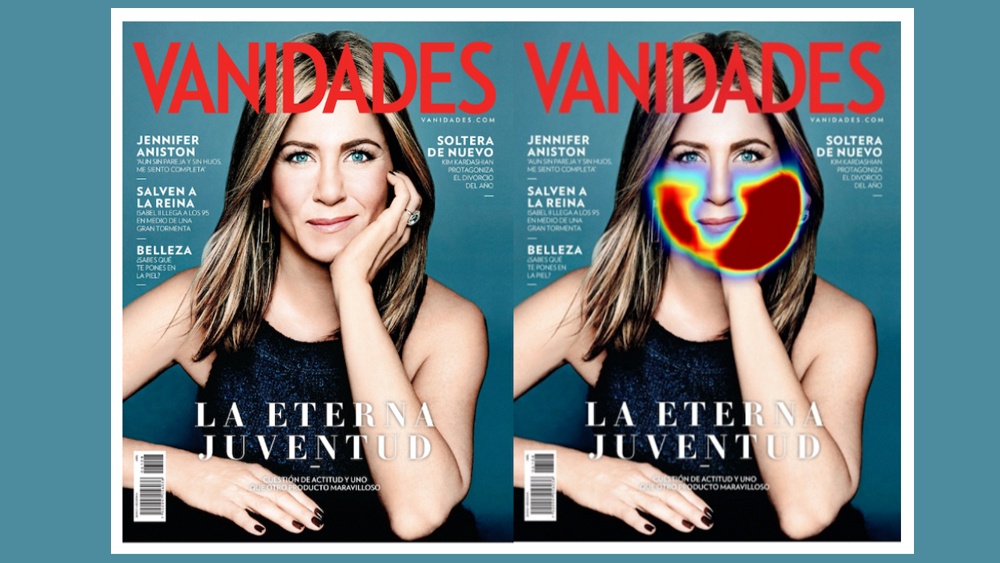

Many will take for granted that an image of a celebrity in an ad or on a magazine cover has undergone some form of manipulation to smooth skin, reduce eye circles or even slim certain features. But an AI-powered tool developed by Adobe Research itself, together with UC Berkeley, can show just how much by producing a heat map on the image. The redder the output, the more manipulation.

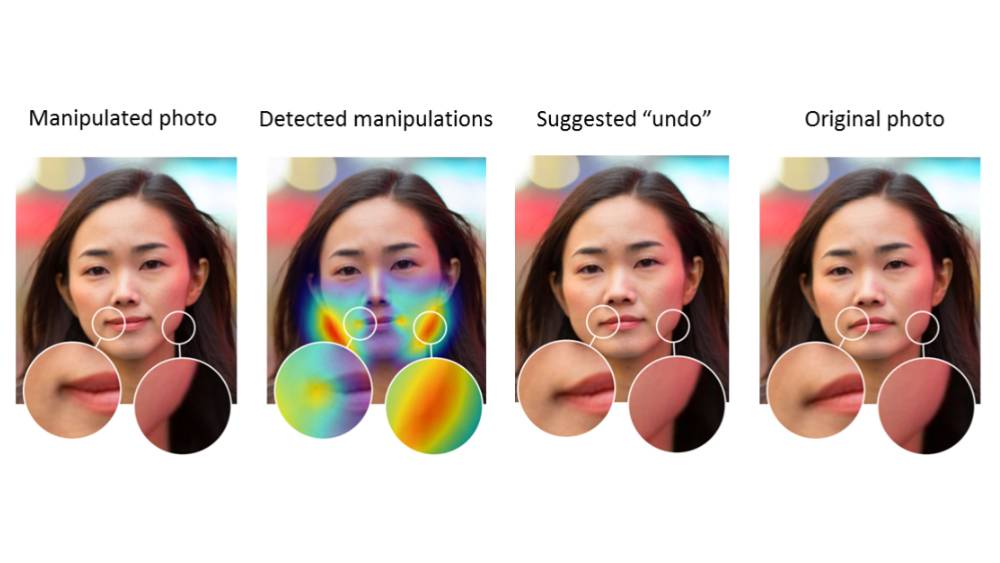

The tool has been trained specifically to detect the use of one of Photoshop's most powerful features for manipulating faces: Face-Aware Liquify (FAL). The FAL Detector is a binary classifier built on a Dilated Residual Network and trained on images that were edited using the feature (see the research paper). It can even attempt to reverse the use of FAL to undo the manipulation.
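For the curious, here is a rough idea of how a "redder means more manipulation" heat map can be drawn. This is only a sketch and not the FAL Detector's actual code: it assumes, hypothetically, that a detector has already estimated how far each pixel was shifted horizontally (`dx`) and vertically (`dy`). The function names and the white-to-red colour scale are made up for illustration.

```python
import math


def displacement_magnitude(dx, dy):
    """Combine hypothetical horizontal and vertical pixel shifts into one distance per pixel."""
    return [
        [math.hypot(x, y) for x, y in zip(row_x, row_y)]
        for row_x, row_y in zip(dx, dy)
    ]


def to_heat_map(magnitude):
    """Turn shift distances into RGB colours: white for untouched, pure red for the largest shift."""
    peak = max(max(row) for row in magnitude)
    heat = []
    for row in magnitude:
        colours = []
        for m in row:
            # Scale each shift against the largest one found in the image.
            t = 0.0 if peak == 0 else min(m / peak, 1.0)
            fade = round(255 * (1 - t))  # green and blue fade out as the shift grows
            colours.append((255, fade, fade))
        heat.append(colours)
    return heat


# A toy 2x2 "image": one pixel moved a lot, one moved a little, two untouched.
dx = [[0.0, 3.0], [0.0, 0.0]]
dy = [[0.0, 4.0], [0.0, 2.0]]
heat = to_heat_map(displacement_magnitude(dx, dy))
for row in heat:
    print(row)
```

In this toy example, the untouched pixels stay white, the pixel that moved furthest comes out pure red, and the one that moved a little lands on a pale pink in between.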

To test the tool, Within Health, an online treatment service for eating disorders, put it to work on magazine covers, including 20 that featured close-up images of Jennifer Aniston. It detected clear use of Photoshop's FAL in 50% of the samples. The most frequently edited parts of Aniston's face were her jaw and chin (both manipulated in six out of the 10 flagged images), while her lower lip was altered in three images.
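To put those figures in proportion, the arithmetic can be laid out in a few lines. This sketch uses only the numbers quoted above and assumes that the "out of 10" counts refer to the 10 covers flagged as edited; the variable and function names are illustrative.

```python
# Figures quoted for the Jennifer Aniston covers.
covers_tested = 20
covers_flagged = 10  # 50% of the sample
region_counts = {"jaw": 6, "chin": 6, "lower lip": 3}


def share(count, total):
    """Percentage of `total` represented by `count`, rounded to a whole number."""
    return round(100 * count / total)


flagged_share = share(covers_flagged, covers_tested)

# For each facial region: (share of flagged covers, share of all covers tested).
by_region = {
    region: (share(n, covers_flagged), share(n, covers_tested))
    for region, n in region_counts.items()
}

print(f"Flagged covers: {flagged_share}%")
for region, (of_flagged, of_all) in by_region.items():
    print(f"{region}: {of_flagged}% of flagged covers, {of_all}% of all covers")
```

Read this way, a jaw edit appears on 60% of the flagged covers but on 30% of the 20 covers tested overall.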

"Aniston has long been admired for her beauty. To many women, her features are iconic," Within says. "Sadly, the decision makers at magazines, ad agencies, and even the press regularly photoshop her look, making editorial decisions about what parts of Jennifer’s face don’t match their idea of perfect beauty."


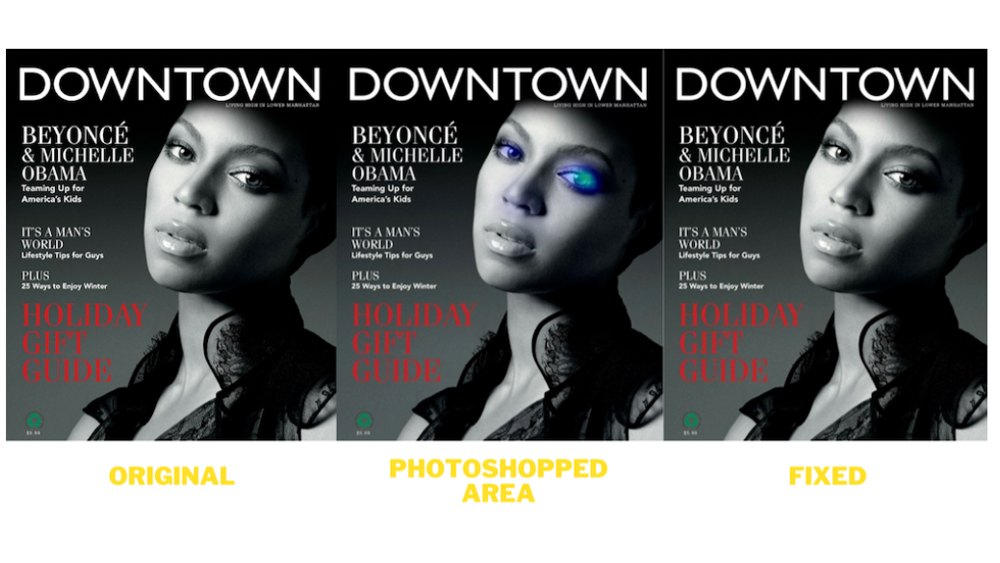

Within found similar results testing magazine covers featuring Angelina Jolie. Again, 10 out of 20 covers showed clear use of FAL, with five of those 10 manipulating her jaw. Testing the tool on images of Beyoncé identified far fewer cases, but this may be partly because magazines tended to use full-body images of Queen Bey and because the AI model powering the FAL Detector was trained using a dataset of images from Flickr that is biased towards white faces. Still, some examples were found.

Within notes that new tools for photo and video manipulation are appearing all the time, and it suggests that the FAL Detector is a "much-needed weapon in the fight against the increasingly unrealistic portrayals of beauty". It believes that seeing how images are manipulated can help us to understand the motives and goals of the manipulation, improving media and content literacy.

While this test used an AI model trained to detect the use of one specific Photoshop tool, similar models could be created to detect the use of other techniques. The development is also of interest amid the huge advances in text-to-image AI generation and deepfakes. Many have suggested that with AI-created images becoming harder to spot, we urgently need tools that can detect whether an image is real, edited or an AI-generated fabrication.

The FAL Detector is available on GitHub.


Joe is a regular freelance journalist and editor at Creative Bloq. He writes news, features and buying guides and keeps track of the best equipment and software for creatives, from video editing programs to monitors and accessories. A veteran news writer and photographer, he now works as a project manager at the London and Buenos Aires-based design, production and branding agency Hermana Creatives. There he manages a team of designers, photographers and video editors who specialise in producing visual content and design assets for the hospitality sector. He also dances Argentine tango.