Plane crazy: behind the scenes of Robert Zemeckis movie Flight

Atomic Fiction co-founder Kevin Baillie tells Mark Ramshaw how his studio reached new heights in the sobering drama Flight.

There are many reasons to take note of Robert Zemeckis' latest. Flight marks the director's return to live action following a pioneering period exploring the world of performance capture. It sees star Denzel Washington in rare anti-hero mode. And it's a critical darling (recently gaining two Oscar nominations) that also packs a powerful visual effects punch. Thanks to the latter element - which recently earned a VES award - it's also a movie that puts pioneering newcomer Atomic Fiction firmly on the map.

"We'd done a few projects before - including shots for Transformers: Dark of the Moon, Underworld: Awakening, and the last series of Boardwalk Empire - but this is the largest project to date, and the first in which we were the primary (and in fact only) vendor," says studio co-founder and VFX supervisor Kevin Baillie.

Baillie and co-founder Ryan Tudhope had previously worked together at The Orphanage and then at Zemeckis' ImageMovers. "When Disney shut the door there, we figured we could just go work for somebody else, or take the amazing lessons we'd learned and philosophies developed to start our own thing," says Baillie.

Central to the studio’s forward-thinking business plan was the idea of cloud-based rendering. Baillie says they opted to take this relatively new and unusual path in order to facilitate a talent-based studio structure: "Talented people are - deservedly - expensive. Skimping on that side of things would leave us dead before we even started, so we had to look at other ways to save money.

"That led us to cloud rendering, not only because of the obvious savings, but also because it meant we could ensure the artists would always have the resources needed to get the job done. It's not every day a chance to give artists more while spending less comes along."

While the use of the cloud was vital to handling the work on Flight, Baillie admits they expected resistance from movie studio Paramount. "We went into the first meeting with our bulletproof armour on," he laughs. "We had all our relevant information prepared, including all the data about security. But then the first thing they said was how excited they were about cloud rendering and its cost-effectiveness. They were able to look beyond the obvious panic call, study the realities and make an educated decision."

Building the aircraft

A key challenge on the project was to find a way to depict a fully working airport - not an easy task in security-sensitive, post 9/11 America. "We searched high and low without finding anything that looked right, until we made a contact over at Orlando International," says Baillie. "We were able to drive around the entire place, shooting enough reference material to ultimately build something very faithful to the overall layout and feel of the place."

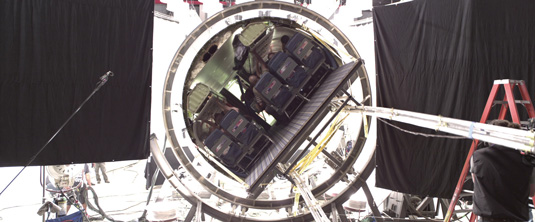

With the film depicting the flight and pivotal crash landing of the passenger plane piloted by Denzel Washington's character, it was also necessary to build a completely photo-real digital aircraft - one that crucially wasn't closely modelled on anything produced by the likes of Boeing.

"Doug Chiang was brought on in the early stages to design the aircraft inside and out," says Baillie. "From that, one of our modellers built a first design pass, which we then spent around six months getting to a final design, tricking it out in 3D elements in a big way. Every armature, hydraulic element, flap and rivet needed to be modelled, so that we could create a believable amount of vibration and violent shaking. It would have been possible to cheat with camera shakes, but it's the complexity you get from all the components rattling and bending that sells it."

The crash

For the crash, the team studied crash sequences in movies including Cast Away and Alive, but Baillie says this particular one was ultimately designed to be more matter-of-fact, and therefore more hard-hitting.

"It's a much more realistic approach, with the audience witnessing much of it from within the aeroplane. The personal aspect is what makes it so much more terrifying."

To create the views out of the cockpit in mid-descent interior shots, Atomic Fiction utilised a mixture of digital elements and live footage. For the latter, they took to the skies in a helicopter rigged with a custom camera setup consisting of three Red Epics, using these to stitch together panoramic footage.

The crash ends with a shot inside the cockpit. "We put Denzel in a real pilot's chair against green screen, and shot with a camera over-cranked to 120 frames a second," says Baillie. "We then used extensive photo reference from the live cockpit to build and light a digital version that crumples all around him."

If Washington found acting against green screen awkward, Baillie says he never gave them a hard time for it. "He's such a fantastic actor that he was able to instantly switch on and put himself into the head of his character. Really, actors can make or break our work. No matter what you do, without a convincing performance up there, the effects will never look believable."

For the final part of the crash, a range of solutions was used to generate the smoke, fire, dirt and collision debris effects. While the cloud was vital for rendering of frames, Baillie says that they opted to use a local render farm for simulation. "That's one area where we're not doing it in the cloud quite yet, simply because of transfer times."

Baillie views Flight as a particularly important project for Atomic Fiction in this early stage of its life: "Carrying a show with 395 visual effects shots has obviously put us on the map. But we got the chance to get involved with a movie that has really affected people and might even be of help to some. In our line of work, that's pretty rare. The sense of satisfaction is pretty amazing."

This article originally appeared in 3D World magazine
