Revealed: the future of cinematic storytelling in video games

Video game artists from leading studios give clues to the future of video game cutscenes and more.

Of the many graphical sophistications that have become staple parts of modern video game design, it's fair to say that the sheer excellence demonstrated in cinematic cutscenes is not given anywhere near the same prominence as the in-game graphics engines that make gameplay so immersive.

At its most basic level, the cutscene is a narrative device designed to flesh out the game world beyond what the player can control, often serving to advance the plot, chart character development or highlight prominent thematic details.

"A cinematic is the walkway between a film and the game," suggests Franck Lambertz, VFX supervisor at MPC. "It should be a moment to get the player into the right mood for the game."

Until the dawn of 32-bit processing power in the mid-'90s, the hardware restrictions of early home consoles meant that effective storytelling was often limited to a core set of narrative tools, with dialogue boxes and inventive sound design playing a huge part in defining atmosphere.

Eventually, the 3D rendering capabilities of consoles like the Sony PlayStation and the Sega Saturn gave developers a chance to tap into the language of cinema, with the goal of capturing a sense of reality high on the graphical agenda.

As in-game engines became more sophisticated, so did the attention to cinematic detail. The only real downside was that for many gamers, the more polished the cutscene, the bigger the fall would be when making the transition back into gameplay. If only the rest of the game looked as good...

It's a different story these days. Video game cinematics have become far more complex, and we now find ourselves watching exquisitely crafted scenes that merge seamlessly with in-game graphics. And with the next generation of console hardware now upon us, it's safe to say that over the lifespan of the PlayStation 4 and the Xbox One, the boundaries of what cinematics can do will be pushed even further.

The language of cinema

"If you create compelling characters and a fun world for them to be in, players are not going to mind having control taken away from them briefly while the rest of the story plays out," says Josh Scherr, veteran lead cinematics animator at Naughty Dog. "That's what we want to do at Naughty Dog - make cinematics that people don't want to skip."

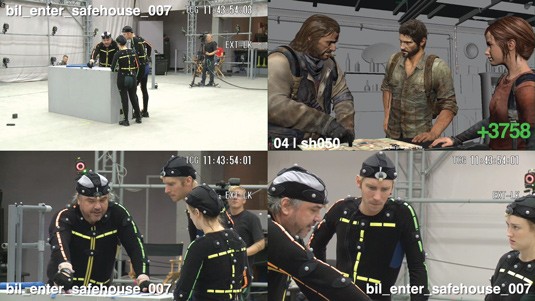

While storytelling has always been at the heart of Naughty Dog's creative ethos, the tense and hugely emotive video game The Last of Us perhaps best demonstrated the studio's commitment to creating narrative-driven titles that fully embrace the nature of cinema in order to strengthen player immersion and connection to the story.

"One of the big things we did on The Last of Us in particular was pay attention to the transitions between cutscene, in-game character and gameplay," says Shaun Escayg, lead cinematics animator. Together with fellow cinematic leads Josh Scherr and David Lam, Escayg and the cinematics team spent the last third of the production time stitching those transitions together seamlessly, working on camera, animation and overall direction at the same time.

"If you want to keep players immersed, the quality of both the writing and the actors' performances must be consistently high," continues Scherr. In order to make the gritty cinematics of The Last of Us stand above the norm, the virtual performances of the story's cast had to be just as believable as those in a Hollywood production, which meant taking lessons from films like The Road, Children of Men and I Am Legend, along with an injection of art-house flair from documentaries.

Similarly, for creative agencies like MPC, which collaborated with Ubisoft on its incredible cinematic for Assassin's Creed IV: Black Flag, translating the language of cinema across to the world of gaming is a natural progression. "Video games and cinema operate in different media, but they both work in their own right," says Franck Lambertz, who feels that the emotional aspects of both disciplines are what make them such a dynamic pairing. "It's great when the two mediums meet and morph their unique attributes into a new entity."

For studios such as MPC, Axis Animation and Platige Image, establishing a solid connection between video game developers and cinematic specialists is essential in communicating the right kinds of mood.

"Working on the cinematic trailer for The Witcher 3, it actually already means that you have a good relationship with the developer. We have known the teams at CD Projekt RED for many years, and it's much deeper than a typical client/vendor relationship," says Tomek Baginski of Platige Image, director of the striking The Witcher 3: Wild Hunt cinematic. "Every project we receive from CD Projekt RED is demanding, but also allows us to stay creative in what we do. It's a relationship based on trust, and I think that makes us solid partners."

Realistic storytelling

When it comes to creating a realistic scene, one of the major aspects of production is having a good source of reference material to help ground the animation within a believable frame. Even reality can be heightened in terms of character animation, as Naughty Dog's David Lam explains. "Realism is subtle, but it can be exaggerated. Knowing how to exaggerate it is the key thing. If it goes too far it can be a bit too cartoony, so understanding the broad ranges from subtlety to exaggeration is very critical."

For Naughty Dog, motion capture and actor performance are vital in creating cinematics that resonate with players, and for laying the groundwork that animators will build on. Back in 2006, the development team behind Uncharted decided to cast the same actors to provide both the voice and physical performance of their characters through motion capture - an unusual approach at the time.

"It makes a huge difference to the quality of the performances. What's more, instead of recording everyone separately you can record everyone together on the stage, just like you would when shooting a film. You get a particular kind of chemistry and electricity when people are actually interacting with each other that you never would if you're just recording someone separately in a sound booth."

3D character artist and digital sculptor Mariano Steiner, however, believes anatomy to be the key to realism. "From my point of view, dictating the level of realism is all about anatomy - you can be very precise and get a realistic model, or you can distort and play with forms to get a more stylised feel."

Benjamin Flynn, senior character artist at Rocksteady Studios, warns that interpreted style is still crucial, and requires a careful balance: "The Arkham series has such an iconic style, so we do have to balance between the two rather carefully. Even with high-resolution maps and meshes it's still important for us to get a handcrafted feel. No photo reference or scans are allowed to be used, as that would detract from the artistry we want to purvey in the characters as well as the world at large."

Skills and software

While effective storytelling is essential, the technological feats that run alongside a good concept, script and cast are not to be underestimated. ZBrush is a popular choice for most professionals, and Irrational Games's Gavin Goulden, who worked on BioShock Infinite, is quick to sing the software's praises: "I really cannot imagine my day-to-day work without ZBrush. I believe it has become such an essential part of most character artists' workflow that it's hard to imagine creating game art without it. It has become vital to our industry."

Of course, modelling is but one aspect of production, and Richard Scott of Axis Animation explains how Axis combined all the various tools at its disposal in order to produce the stunning debut trailer for upcoming Xbox One title Fable Legends.

"We put the Fable Legends project through our standardised pipeline, which involves Maya, modo and ZBrush for asset creation, Maya for rigging and animation and Houdini for visual effects, lighting and rendering. Compositing is handled in Digital Fusion, and we have our own tools to link these packages together."

It's a complex process that involves a lot of planning, as Scott explains: "We invest a lot of time in pre-production and during that process Ben [Hibon, director] completed storyboarding and 2D animatics while our team was working on concept art for the key elements, as well as a colour script defining the look of all the shots."

As if that weren't enough, the team still has to build various assets, and implement procedural rigging tools in Maya to give the animators the control they need. "We then shot motion capture at the Imaginarium, with Ben on set directing the actors. Once we have the first motion-capture data, we begin to go into the layout phase, adding the data to proxy characters in a proxy environment and working on both the camera work and edit."

This motion-capture data was then used by the animation team, where they pushed it further via keyframe animation and gave it that wonderful stylised feel that you can see in the final trailer.

From very early on in the project, the VFX team at Axis research and develop the key effects required, and once the animation is in progress they can begin to integrate the VFX into the final shots. "At the same time," Scott adds, "our lighting leads are doing lighting setups for the key scenes; these setups are then given to the rest of the lighting team so they can work on a series of shots. Our lighting team also composite all their own shots in Digital Fusion and work closely with the guys doing the digital matte paintings."

Naturally, the more visually ambitious the project, the more technical challenges there will be. Along with the headaches involved with coding and manual programming on The Last of Us, keeping the quality consistent was a paramount concern for Escayg, Lam and Scherr.

"From a broad production sense, one of the biggest technical challenges was managing the quality in the time available and with the amount of footage," says Escayg. When you consider that the cinematics taken from the game add up to a feature-length movie of over 90 minutes, you can imagine how hard it would be to stick to a tight production schedule.

For Lam, one of the largest challenges was getting everything that was created in Maya to match with in-game material. "At Naughty Dog, we spend a great deal of time outside Maya and dealing with the game engine. It's important to understand how to integrate scenes, how to work within the game engine, as well as the game features underneath the hood, which can change a lot."

Texturing is one area that sees huge improvements with each new generation of hardware. Rocksteady Studios is one company that is well-versed in dealing with detailed textures, as Flynn explains. "We are very practised at saving on textures and UV while creating complex, but stable shader systems. You can do a lot with little if you think about key areas of a character versus unseen or repeated detail. A tip would be to use the space you save mirroring areas to include other parts that you would otherwise not have space for; or just increase the pixel depth of current elements."
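As a rough illustration of Flynn's tip, the back-of-envelope Python sketch below estimates how much extra detail mirroring could buy. It assumes that symmetric parts of a character share one mirrored UV layout, that the freed texture space is shared evenly among all remaining UV islands, and that "pixel depth" can be read as texel density. The function name and the 60 per cent figure are hypothetical, chosen purely for illustration; they are not Rocksteady's method or numbers.

```python
import math


def density_gain_from_mirroring(symmetric_fraction: float) -> float:
    """Estimate the linear texel-density gain from mirroring UVs.

    symmetric_fraction is the share of the UV sheet (0.0 to 1.0) taken up
    by parts that could be mirrored. Hypothetical helper for illustration.
    """
    if not 0.0 <= symmetric_fraction <= 1.0:
        raise ValueError("symmetric_fraction must be between 0 and 1")

    # Mirrored halves overlap in UV space, so half of that area is freed.
    freed = symmetric_fraction / 2.0
    used = 1.0 - freed

    # Assumption: every remaining island is scaled up evenly to refill
    # the sheet. Area grows by 1/used, so each side grows by its root.
    return math.sqrt(1.0 / used)


if __name__ == "__main__":
    gain = density_gain_from_mirroring(0.6)
    print(f"Linear texel-density gain: {gain:.2f}x")
```

Under these assumptions, a character whose mirrorable parts fill 60 per cent of the texture sheet frees 30 per cent of it, which works out to roughly a 20 per cent gain in detail along each axis. Alternatively, as Flynn suggests, the same space could hold parts that would otherwise not fit.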

For 3D artists like Mariano Steiner, achieving realistic texture effects relies on a lot of experimentation and cross-rendering. "I'd say my process is 60 per cent ZBrush and 40 per cent Photoshop. It's all a mix of brushes, alpha techniques and texture compositions. Plus having the patience to test all that again and again, until it looks the way you want."

So what can we expect to see from the next wave of consoles? "The one thing we're discussing is doing 100 per cent real-time cutscenes," says Naughty Dog's Josh Scherr. "There are obvious advantages to that - the transitions between gameplay and cinematics will be that much smoother, and we'll also be able to do things like persistent props and clothing, so that if a character is carrying a shotgun in a player-controlled sequence, they will be carrying a shotgun in the cutscene, too."

Capturing every nuance

Likewise, new developments in performance capture will help studios realise their ambitions as they enter the next generation of video game cinematics. Remedy has licensed Dimensional Imaging's DI4D facial performance technology for its Xbox One video game Quantum Break - a technology that can derive very high-definition facial motion capture from an array of nine standard video cameras, without markers, makeup or special illumination.

"Quantum Break is a hugely ambitious project that combines action and narrative components to bring the characters to life. The only way to achieve the high quality of performance was to create highly realistic digital doubles of talented actors," says Sam Lake, creative director of Remedy. "By using Dimensional Imaging's DI4D facial performance capture solution combined with Remedy's Northlight storytelling technology we can ensure that every nuance of the actors' performances is captured on screen."

Perhaps, then, the question should be, just how far will the next generation of technology take us? The debate rages on between developers - Richard Scott and Axis Animation clearly believe that the future has already arrived.

"The new consoles and advances in video game technology have already changed the way we do things. We are working on more projects that involve real-time game engines as the rendering solution. Throughout 2014 we are embarking on an R&D plan to allow us to integrate even more tightly with our clients' real-time game engine pipelines," Scott enthuses.

"We have also just set up axisVFX, a boutique visual effects studio founded by Axis alongside three industry experienced VFX supervisors and VFX producers. Growing the visual effects part of our business is a big goal for us in 2014."

However, Platige Image's Tomek Baginski argues that we should keep sight of the creative energy behind the technology. "What will still be important is the story - what the creator has to say. Creativity will have fewer limitations. In this sense, I don't think the new generation consoles will dramatically change the way we work.

"I consider myself a filmmaker of sorts, and in that sense the storytelling rules don't change simply because of the tools we use. I don’t think this generation will see all games solely using real-time engines - it's still too early for such a change. But without a doubt, it will happen one day."

Words: Nicola Henderson

This article originally appeared in 3D World issue 177.
