Amazing AI tool reconstructs photos like magic

You can't do this with Photoshop's Content-Aware Fill.

If you're retouching photographs and want to cover up small blemishes or remove unwanted details, Photoshop's Content-Aware Fill can be a life-saver. It's perfect for patching up small areas of images, but try it on larger areas and the results are likely to look fairly weird.

However, a team of researchers from Nvidia is working on a technique that makes it possible to realistically fill huge gaps in photographs, without the results looking like a genetic experiment gone badly wrong.

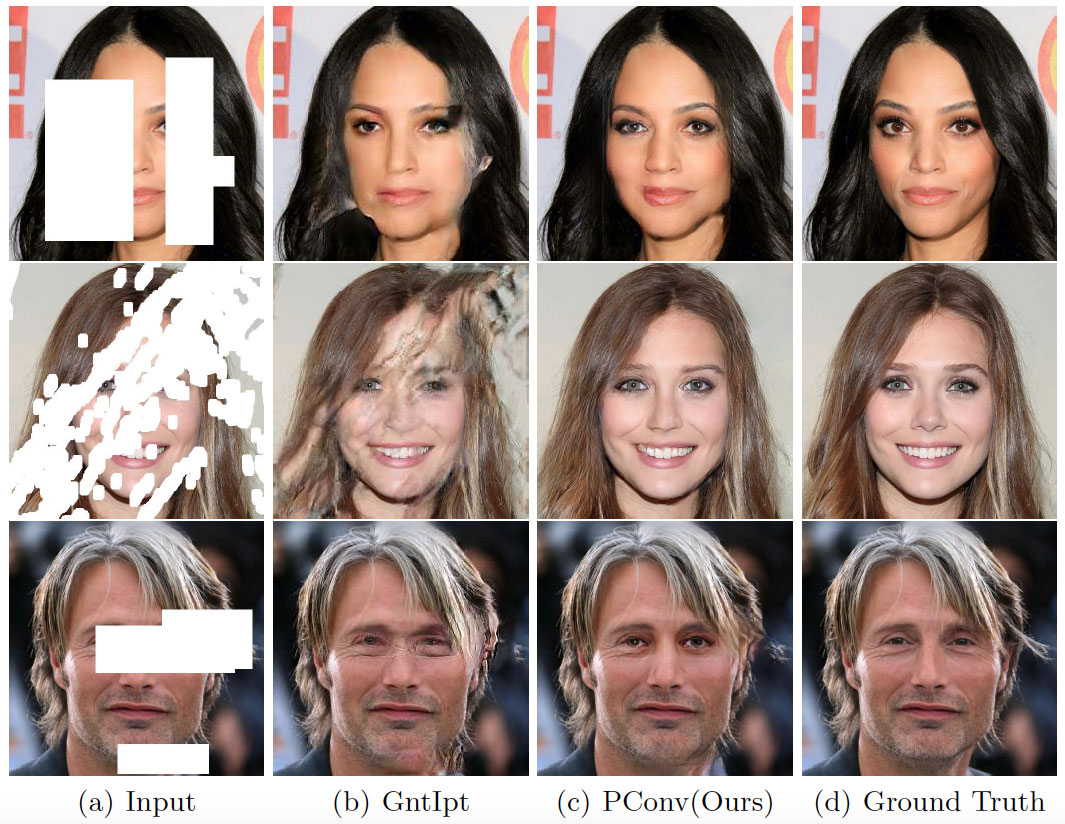

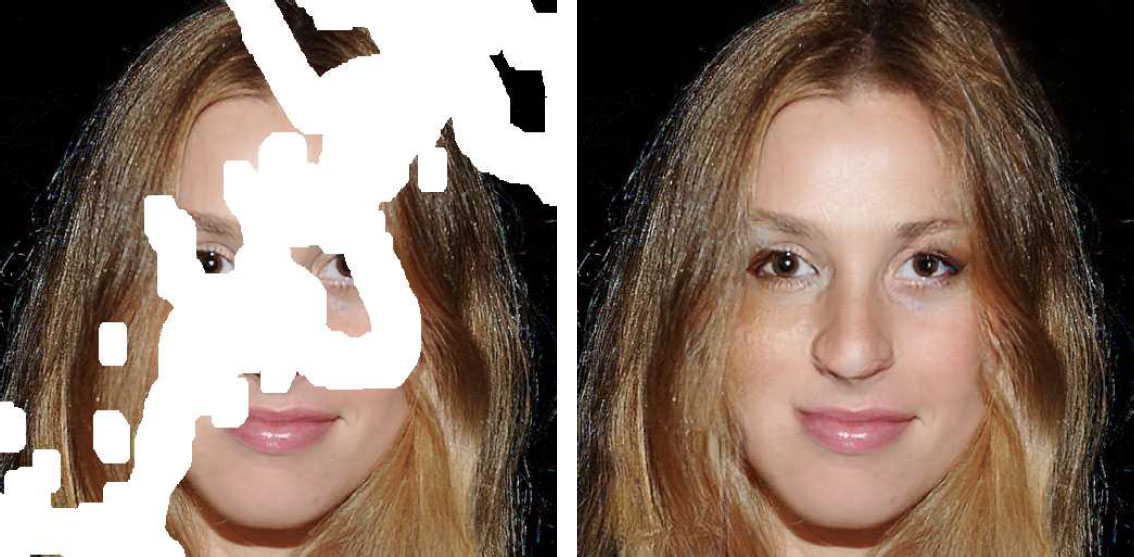

The technique is called 'image inpainting'. It uses a state-of-the-art deep learning method to edit photos by removing content and filling in the gaps, and to reconstruct images that are badly corrupted with holes or missing pixels.
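
To make 'filling in the gaps' concrete, here's a minimal sketch of a much older, non-learning approach called diffusion fill, which repeatedly replaces each missing pixel with the average of its neighbours. This is not Nvidia's deep learning method. It's the kind of simple smoothing that tends to leave blurry patches on large holes. We assume greyscale images stored as 2D lists of floats and a mask where `True` marks a missing pixel; the function name `diffusion_fill` is our own, hypothetical choice.

```python
from typing import List

Image = List[List[float]]  # assumed: greyscale image as rows of floats
Mask = List[List[bool]]    # assumed: True marks a missing pixel (a "hole")


def diffusion_fill(image: Image, mask: Mask, iterations: int = 200) -> Image:
    """Hypothetical classical baseline, not Nvidia's method.

    Known pixels are never changed; each hole pixel is repeatedly set to
    the average of its 4-connected neighbours, so holes settle into a
    smooth blend of the surrounding values.
    """
    h, w = len(image), len(image[0])
    # Seed holes with the mean of the known pixels so iteration starts somewhere sensible.
    known = [image[y][x] for y in range(h) for x in range(w) if not mask[y][x]]
    seed = sum(known) / len(known) if known else 0.0
    out = [[seed if mask[y][x] else image[y][x] for x in range(w)] for y in range(h)]
    for _ in range(iterations):
        nxt = [row[:] for row in out]  # update from the previous pass (Jacobi style)
        for y in range(h):
            for x in range(w):
                if not mask[y][x]:
                    continue
                neighbours = [
                    out[ny][nx]
                    for ny, nx in ((y - 1, x), (y + 1, x), (y, x - 1), (y, x + 1))
                    if 0 <= ny < h and 0 <= nx < w
                ]
                nxt[y][x] = sum(neighbours) / len(neighbours)
        out = nxt
    return out
```

Because it can only blend neighbouring values, this kind of fill has no idea what a face or a landscape should look like. That missing knowledge is what a trained network is meant to supply.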

The team used high-end Nvidia Tesla V100 GPUs to train a neural network with over 55,000 randomly generated masks of streaks and holes that were applied to images from the ImageNet, Places2 and CelebA-HQ datasets, so that the neural network would learn to reconstruct the missing pixels. The team then used a different set of nearly 25,000 masks to test the network's reconstruction accuracy.
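
The article doesn't say how those masks were generated or how accuracy was scored, so the following is only an illustrative sketch under our own assumptions. `random_mask` draws a few rectangular holes plus some random-walk 'streaks', and `hole_l1_error` scores a reconstruction by its mean absolute error over the missing pixels only. Both function names, the mask shapes and the choice of L1 error are hypothetical, not details taken from Nvidia's research.

```python
import random
from typing import List

Image = List[List[float]]  # assumed: greyscale image as rows of floats
Mask = List[List[bool]]    # assumed: True marks a missing pixel


def random_mask(height: int, width: int, n_holes: int = 2,
                n_streaks: int = 3, seed: int = 0) -> Mask:
    """Hypothetical mask generator: rectangular holes plus random-walk streaks."""
    rng = random.Random(seed)  # seeded so the same seed gives the same mask
    mask = [[False] * width for _ in range(height)]
    for _ in range(n_holes):
        # A rectangular hole up to a quarter of each image dimension.
        hh = rng.randint(1, max(1, height // 4))
        hw = rng.randint(1, max(1, width // 4))
        top = rng.randint(0, height - hh)
        left = rng.randint(0, width - hw)
        for y in range(top, top + hh):
            for x in range(left, left + hw):
                mask[y][x] = True
    for _ in range(n_streaks):
        # A streak: a random walk, clamped to the image, marking each pixel it visits.
        y, x = rng.randrange(height), rng.randrange(width)
        for _ in range(rng.randint(max(1, width // 2), max(1, width))):
            mask[y][x] = True
            dy, dx = rng.choice(((0, 1), (0, -1), (1, 0), (-1, 0)))
            y = min(max(y + dy, 0), height - 1)
            x = min(max(x + dx, 0), width - 1)
    return mask


def hole_l1_error(pred: Image, target: Image, mask: Mask) -> float:
    """Hypothetical accuracy measure: mean absolute error over masked pixels only."""
    diffs = [
        abs(p - t)
        for pred_row, target_row, mask_row in zip(pred, target, mask)
        for p, t, m in zip(pred_row, target_row, mask_row)
        if m
    ]
    return sum(diffs) / len(diffs) if diffs else 0.0
```

Scoring only the masked pixels keeps untouched parts of the photo from flattering the result; a held-out set of masks, as the team used, then checks that the network hasn't simply memorised the training holes.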

We're not going to pretend to understand how it all works, but the results speak for themselves. The image inpainting technique is capable of filling in huge gaps – even with really difficult subjects such as human faces or complex landscapes – and doing it in such a way that the edits don't stick out like a sore thumb. Look closely, of course, and you can see the join, but the overall effect is nowhere near as jarring as other content-aware techniques.

The demo video uses fairly low-resolution images, but researchers say that their technique can scale up to handle super-resolution tasks as well. Don't expect to see it in Photoshop any time soon – it currently relies on extremely powerful and expensive deep learning-focused hardware that you won't see outside of a lab. But give it a few years and you should be able to rescue even the most battered of old snaps with a simple one-click fix.

To find out more, read Nvidia's report on its image inpainting technique or, if you're feeling clever, the original (and highly technical) research paper.

Jim McCauley is a writer, performer and cat-wrangler who started writing professionally way back in 1995 on PC Format magazine, and has been covering technology-related subjects ever since, whether it's hardware, software or videogames. A chance call in 2005 led to Jim taking charge of Computer Arts' website and developing an interest in the world of graphic design, and eventually led to a move over to the freshly launched Creative Bloq in 2012. Jim now works as a freelance writer for sites including Creative Bloq, T3 and PetsRadar, specialising in design, technology, wellness and cats, while doing the occasional pantomime and street performance in Bath and designing posters for a local drama group on the side.