“Creativity is innately human” says Adobe exec, explaining how AI can empower creatives of all levels

I sat down with Deepa Subramaniam, VP of Creative Cloud Product Marketing, at Adobe MAX.

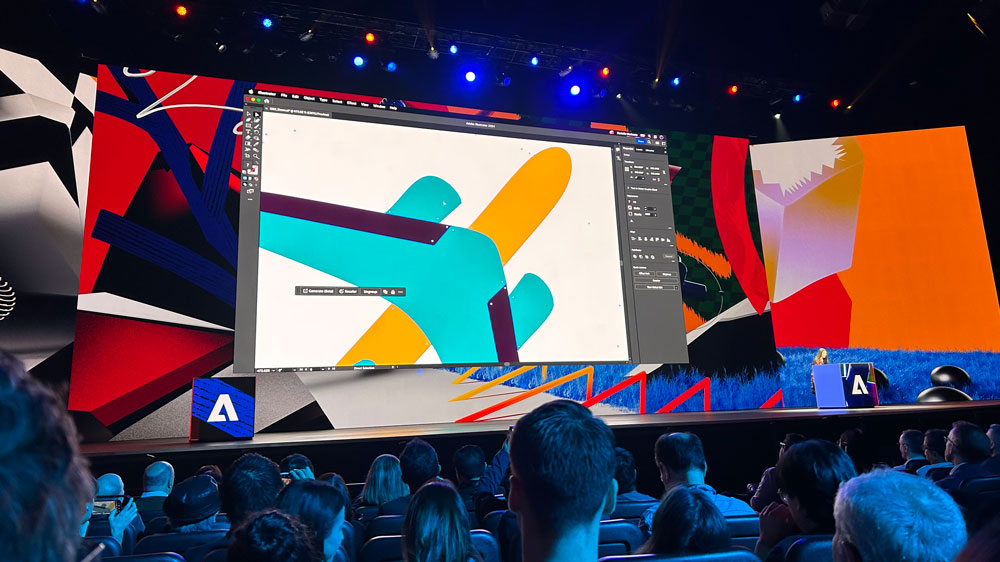

I was at Adobe MAX this year, where the focus was on the full Firefly rollout across the Creative Cloud suite, and how this can enable creatives to keep up with the digital economy's demand for new content. With Illustrator, Premiere Pro and Express gaining various AI tools, the mood was jubilant – but creatives are, understandably, unsettled by the implications of generative AI. Adobe, though, is on a mission to "empower creatives".

After the keynote, I sat down with Deepa Subramaniam, VP of Creative Cloud Product Marketing at Adobe, to discuss all things AI. We talked about Adobe's confident approach to the game-changing innovation, the impact on creatives and creative work, and how AI isn't new for Adobe.

For a full picture of the developments, see my posts on the Illustrator announcements, Premiere Pro announcements, and my overall response to Adobe MAX this year.

Do you have a favourite of the innovations announced today?

It's pretty tough to choose a favourite out of everything announced today. I am really excited about Text-to-Vector in Illustrator, built on the Firefly vector model, which is one of the three new foundational models that we announced today. I think Text-to-Vector is going to bring vector artwork and the power of vector to a huge new audience.

Now in Illustrator, you can use Text-to-Vector to create icons, patterns or whole scenes and get the power of Illustrator, where the vector artwork is grouped properly and is easy to edit. It's just huge – I can do basic things in Illustrator but now I can do so much more just by using Text-to-Vector. So I think that's really exciting and shows how we continue to bring new models into the Firefly family and bring generative AI and generative editing capabilities to more mediums and modalities. [For example,] we're working on text to audio, text to video and text to 3D models. So it's just a very exciting time.

How do you see these functionalities being used differently by everyday users and creative professionals?

Even today in the keynote we saw that Anna is a very seasoned, experienced creative professional. She said she's been using Photoshop for 20+ years and knows Photoshop inside and out. And so she's using Firefly capabilities to supercharge her ideation process. She showed those sketches and she was using Firefly to ideate on a demo, and then to boost parts of a workflow. Tedious selections she'd have to make [before], she can now make easily, and use the filter to add new imagery or remove imagery.

So, creative professionals are going to be able to use the generative capabilities we fold into the creative applications like Photoshop and Illustrator to really get rid of those mundane tasks and help their ideation and their end-to-end creative production process.

Now take someone who's maybe newer to creativity, and to design, who is playing around with the Firefly capabilities in Express – maybe they're a digital marketer or social media manager. Now they can use something like a template, which was shown today, or Express now has a huge new Generative Fill feature – so they can use that to create new imagery.

What's exciting is the breadth of use cases that we're unlocking, from generative AI editing fine-tuned by a creative professional using a professional application like Illustrator, all the way to a designer or creator of any skill level who can create any visual or graphical content. So I think that breadth is very unique to what Adobe brings to the market.

Given a consumer is unlikely to know where the differences lie with the end product, does AI lessen the value of the skill needed to start creating from scratch?

Creativity and how you bring that creative expression to life is so innately human. Anna started working in Photoshop and used Generative Expand, and she clearly had a vision in her mind. We saw from the sketches that she had a picture in her mind's eye, and she could have spent the time doing the selections, the compositing or the layering to bring that to life in Photoshop without the assistance of generative capabilities. She chose to use those generative capabilities to save some time, but ultimately what she created was still a bespoke creation. It just happened that generative editing helped her get to that output and take what was in her mind's eye and make it a reality faster.

Time and time again, that's what we've been seeing since the day that Firefly launched back in March. [The AI tools are] being used to get up and running faster, get some ideas out on paper, start that ideation and then help you in that creative process. But it's not fully replacing the human creator who has that idea in their mind. Whether they're a professional or not, it's just helping them get started faster.

And even going back to the feature that I'm most excited about – Text-to-Vector – it's the same thing. It's like a starting point for a creator on their journey to create whatever's in their mind's eye, and a faster way to achieve that starting point. Whereas as an illustrator you might have had the blank canvas and started creating your vector artwork by hand, now you can at least get up and running with Text-to-Vector. But you're still going to be using the power of the Illustrator canvas and all those tools that are available to you to further it and make exactly the output that you want. That's what's so powerful about how we're bringing Firefly directly into the workflows and applications creators use every day.

Do you see this generative capability being functional across every part of Creative Cloud?

I think that remains to be seen. What's been so exciting about the beta process, with Firefly as this public beta in March, is seeing how the community uses this innovation, and how they fold it into their workflow. So we have an intent – we understand our users, we understand our applications, and we're also listening to and learning from our community [regarding] how they use it, and responding to that. So where are the limits? Where is there more friction that can be solved with new workflows?

That's the beauty of this whole process. You know, I love that Ashley mentioned Generative Recolor, which is a capability we added in Illustrator in June – basically the generative version of a manual feature. Again, it's a time-saving feature. We started seeing that people were using Generative Recolor to kind of get up and running but they were still fine-tuning it with other features.

So we brought a new workflow based on what we started seeing people doing out in the wild. They did the feature and so we did the UX work to package that up as a more contained workflow directly in the same panel. So I think that's where there's so much synchronicity in this process: we're putting innovation out there when it's ready, seeing how the community is using it and responding to that. It's this iterative, sort of copacetic process between the two. It's reinforcing the workflows that we built and the generative capabilities we're bringing to life, but also introducing whole new workflows, which is really powerful.

How does the process work when developing in conversation with the creative community?

We have deep touch points into communities. Our pre-release programmes are [related to] features that are incubated in private beta. We get rapid-fire feedback and incrementally iterate on the feature through that private pre-release mechanism. And then there comes a point where we feel like the innovation is ready enough – it passes all of our strict checks around ethics, harm and bias, and is ready to come to life in a public beta fashion to get broader feedback.

That's been the process that we take into the public beta, which is when we get a lot more feedback from an awesome group of users. And again, we pay attention to that [feedback], respond, engage, and then continue to deal with that feature.

What has made Adobe so sure that it is able to trailblaze this path in such a forward-thinking way?

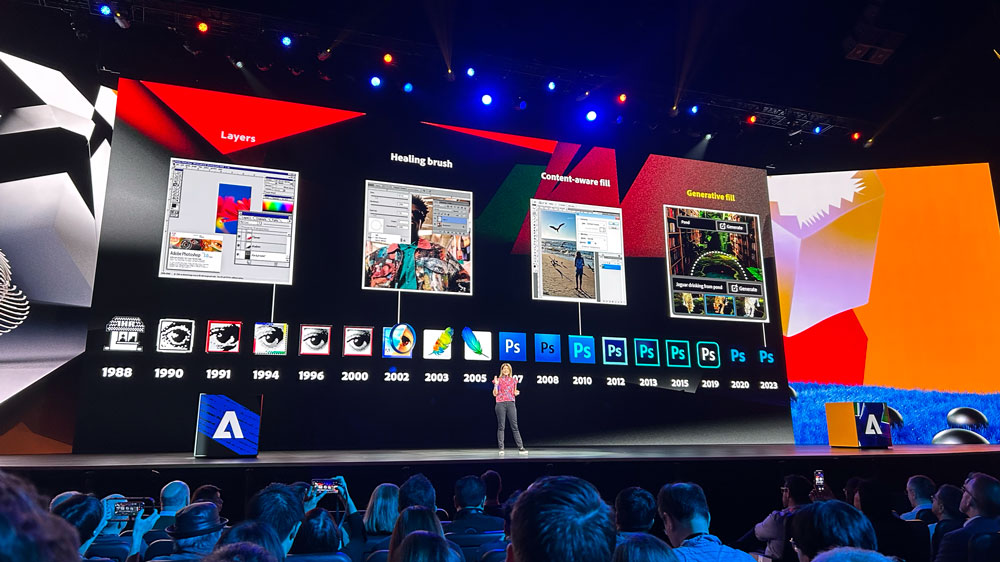

AI has been in Adobe's blood since forever. It's not as if our recent innovation is new – we've been innovating on AI for decades. And fundamentally, I think it comes down to the fact that we are a very mission-driven company. Our mission is to empower creativity for all, and AI can really help boost that mission in a way that is fundamentally different. It's almost fundamental to who we are to explore that and do that thoughtfully at Adobe. We want to be open, transparent, empathetic and kind, and so it's a really exciting journey that we're on. Again, it's so innate to our mission, which is to empower creatives.

What has tipped the balance into AI being such a concern?

It's just very junior right now. Anything that has the potential to change the status quo brings excitement, sometimes fears, and it's just all part of the process. Photography came in and people declared the death of painting, digital photography came in and photography purists were like "what is this?", but that really isn't how it plays out.

New thinking, new ways of creating, new outputs all launch together to create something new. That's the moment that we're in now and it's so early. But the act of creating is so fundamental to who we are as people. It's just too amazing an opportunity to explore and play in, and Adobe has similar values to the way we operate. Let's do this in a way that is 'Adobe' – so that's thoughtful, open, transparent. It's all about empowering.

So I have to say it's a very exciting time for a company internally too. It's like kids in a candy store. It's so fun to play with this. And we're all creators ourselves at Adobe.

Is the future limited to text-prompt AI?

The space is so fast-changing and exciting. An academic paper written around generative AI three months ago is considered completely old news. It speaks to the volume of innovation and interest. This is once-in-a-generation, good creative disruption.

Right now, we're heavily invested in text-based generation. So using a text prompt to create, again, output in different mediums, whether it's images or vector or video or audio. We're exploring a lot of different things.

I think the interfaces that we use are going to become more and more conversational. We're definitely going to have a massive team internally of researchers and people paying attention and exploring, and I think that's what we look at to see how to incubate new ideas that can help our users, whether they're creative professionals, communicators or creators of all skill levels, so that we keep innovating and helping them create, and create easily.

For a full overview of the MAX announcements, see our MAX 2023 development roundup, and for what's coming in the future, see the most exciting 4 Sneaks presented at MAX.

Georgia has worked on Creative Bloq since 2018, and has been the site's Editor since 2023. With a specialism in branding and design, Georgia is also Programme Director of CB's award scheme – the Brand Impact Awards. As well as immersing herself in the industry through attending events like Adobe MAX and the D&AD Awards and steering the site's content streams, Georgia has an eye on new commercial opportunities and ensuring they reflect the needs and interests of creatives.