SEO for startups

Tom Gullen presents a primer on SEO (not only) for startups and explains common misconceptions and mistakes as well as the importance of accessibility

SEO is an industry that sparks frequent heated debate and passionate responses. It’s an industry that is often misunderstood and even dismissed. Yet for startups a basic SEO foundation and understanding of it is likely to be of crucial importance, and can really help them on their path to success.

So how do we go about beginning to optimise our startup’s website for search engines? Accessibility should be a primary concern for websites not only because it makes your website accessible for less able people, but also because a search crawler bot should be considered your least able user. Developing your website in a highly accessible manner comes with the additional benefit of making your website highly accessible for search engine crawlers.

Basic accessibility for websites isn’t difficult to achieve.

Accessibility and SEO

Accessibility with Dynamic Content

Disabled JavaScript is not exclusively the domain of archaic browsers; plug-ins such as ‘NoScript’ have millions of users. The Google crawler is able to execute some JavaScript when crawling. However, it's a risky proposition to rely on this if your content is inaccessible to agents that have disabled JavaScript.

When developing a website I firmly believe that ‘progressive enhancement’ is an essential principle that should be adhered to. If, for example, we are building an online store for music CDs, we would first build it without any JavaScript. A user will click on an artist and a new page will load. Once the site functions and displays perfectly in this manner, we can then add layers on top, such as dynamic content loading with Ajax.

This has two advantages. If your JavaScript fails, there’s a good chance the site will still function and display properly. More importantly, you’ve created a highly accessible website: although you’re using the latest techniques, you haven’t sacrificed accessibility in the process, and as a result crawlers are free to roam your website.
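As a rough sketch of what this layering might look like for the CD store, assume a page with a list of artist links and a content region. The ids `artists` and `content`, the artist names and the URLs are all invented for illustration. The links work as ordinary page loads, and the script only adds Ajax loading on top when JavaScript is available:

```html
<!-- Base layer: plain links. Each click loads a full new page,
     so crawlers and users without JavaScript can reach everything. -->
<ul id="artists">
  <li><a href="/artists/miles-davis.html">Miles Davis</a></li>
  <li><a href="/artists/nina-simone.html">Nina Simone</a></li>
</ul>

<div id="content">
  <p>Choose an artist to see their albums.</p>
</div>

<script>
  // Enhancement layer: runs only when JavaScript is enabled.
  var list = document.getElementById('artists');
  var content = document.getElementById('content');

  list.addEventListener('click', function (event) {
    var link = event.target.closest('a');
    if (!link) { return; }
    event.preventDefault();

    fetch(link.href)
      .then(function (response) {
        if (!response.ok) { throw new Error('Request failed'); }
        return response.text();
      })
      .then(function (html) {
        // Assumes every artist page has its own #content region.
        var page = new DOMParser().parseFromString(html, 'text/html');
        var fresh = page.getElementById('content');
        if (!fresh) { throw new Error('No content region found'); }
        content.innerHTML = fresh.innerHTML;
      })
      .catch(function () {
        // If anything goes wrong, fall back to a normal page load.
        window.location.href = link.href;
      });
  });
</script>
```

If the script never runs, or the request fails, the visitor still ends up on the artist page through the ordinary link, which is the behaviour a crawler sees as well.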

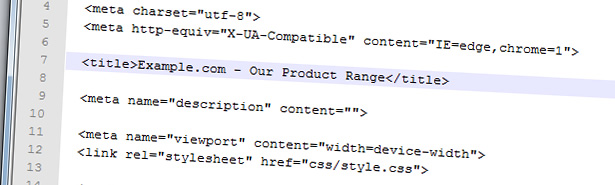

Page title

Page titles are an extremely important part of your page, because they are very frequently relayed in search engine results and carry weight in search engine ranking algorithms. It’s important to keep the title as concise and contextually rich as possible: 65 characters is a good rule of thumb.


A frequent mistake is to incorrectly format the title by placing the name of the website at the start of the title tag.

It’s highly recommended to place the website name at the end of the title – in a search engine result the name of the website is generally of little interest to the people searching and you may sacrifice a lot of your clickthrough rate for that page.
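To illustrate the two orderings, here is a small example for a hypothetical site called Oak Trees Online with a page about identifying oak leaves. Both the site name and the page topic are invented for this sketch:

```html
<!-- Site name first: the part searchers care about comes last
     and is the first thing lost if the title is cut short. -->
<title>Oak Trees Online | Identifying oak leaves by shape</title>

<!-- Page topic first, site name last: the recommended ordering. -->
<title>Identifying oak leaves by shape | Oak Trees Online</title>
```

The second title is 50 characters long, so it sits comfortably within the 65 character rule of thumb.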

Correctly formatting images

As mentioned previously, we should consider search crawlers our least able users. Although a search crawler can fetch and render an image, understanding the content of an image is an extraordinarily complex problem for a computer. We need to let the crawler know more about the content of the image, and we achieve this through the alt attribute (often loosely called the alt tag).

The alt attribute is used by user agents that can’t display images. It should be concise and describe the content of the image. If you have a photo of an oak tree in a park you might give it an alt attribute of 'Oak tree in Richmond Park'. Naming the image file descriptively can also have a positive effect: for example, 'oak-tree.jpg' would be better than 'myphoto.jpg'. Not only does the search engine now have a much better grasp of the content of the image, it also has a better idea about the content of your website in general. A well formatted image optimised for accessibility may look as follows:

<img src="images/oak-tree.jpg" alt="Oak Tree in Richmond Park" />

This is far more accessible and gives more clues to search crawlers than:

<img src="images/dcm0000013.jpg" />

Formatting links properly

If you are linking to a page on your website that goes into great depth about oak tree leaves, the worst way this could be linked to on your page is:

If you want to read about Oak Leaves, <a href="leaves.html">click here</a>.

This is not only awful from a usability point of view, but also from an SEO point of view. One highly important part of a link's anatomy is the text within the link – this provides a very strong clue to search crawlers about what the page being linked to is about. If you’ve ever heard of ‘Google Bombing’ this is the underlying reason why it works.

A better way to present the link would be as follows:

Read more about <a href="oak-leaves.html">Oak Leaves</a>.

This principle extends to hyperlinks on other websites that link back to you. A related website linking to your website in this way:

Want to learn more about Oak Trees? <a href="http://www.oak-trees.com">Visit this site!</a>

is inferior to being linked to this way:

Learn more about <a href="http://www.oak-trees.com">Oak Trees</a>.

In the second example search crawlers are being given a big hint about the content of the website being linked to.

You obviously have limited control over how third party websites format their links. However, it’s worth keeping in mind, as opportunities to suggest better link text to third party website owners may present themselves in the future.

Meta tags

It’s common knowledge now that the meta Keywords tag should be considered redundant for SEO purposes. Not only is it a waste of markup, it also gives your competitors strong clues about the terms you are targeting!

There are, however, other very useful meta tags which should be utilised on your website.

- Description meta tag: The description meta tag should be a concise overview of the page. It is often displayed in search engine results, so not only is it important to design it as concisely and as descriptively as possible but also think about how appealing it is for a potential visitor to click on. Descriptions shouldn’t extend more than around 160 characters in length.

- Canonical tag: The canonical tag is an important one that is often overlooked by web developers. Strictly speaking it is a link element rather than a meta tag, but it sits alongside the meta tags in the head of the page. To understand why we need it, we have to understand that search engines can treat pages with slight variations in their URLs as separate and distinct pages. As an example, take these two URLs:

  http://www.example.com/shop/widget.html
  http://www.example.com/shop/widget.html?visitID=123

  They could be treated as distinct URLs even though they display exactly the same content. This could affect your site negatively, because you ideally want the search engines to index only the first URL and ignore the second. The canonical tag solves this issue:

  <link href="http://www.example.com/shop/widget.html" rel="canonical" />

  Placing the canonical tag on the widget.html page lets crawlers know your preferred version of the page.
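Put together, the head of the widget page might look something like the sketch below. The title and description text are invented for illustration; only the canonical URL comes from the example above:

```html
<head>
  <!-- Page topic first, site name last -->
  <title>Oak widget | Example Shop</title>

  <!-- Often shown in search results: concise, descriptive and
       written to be appealing to click on. -->
  <meta name="description" content="Hand-made oak widgets in three sizes, with free UK delivery and a 30-day returns policy." />

  <!-- Tells crawlers which URL is the preferred version of this page -->
  <link rel="canonical" href="http://www.example.com/shop/widget.html" />
</head>
```

The description here is 88 characters long, well under the suggested limit of around 160.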

Sitemaps

Sitemaps should be kept up to date and contain every URL you want to be indexed. You might not realise that some pages on your site are buried deeply in your website and hard to access – a search crawler may not explore that deeply. By listing every page on your site in a sitemap you’ve made your site far more accessible to the search crawlers and you can be sure that the search engines will know about all your content.

Sitemaps have evolved and are now commonly written in XML. The XML schema for sitemaps comes with a few optional fields, such as the last modification date, how frequently the page changes and its relative priority.

If you are not completely confident in your usage of the more advanced fields such as the change frequency and priority, it’s best to leave them out. The search engines are going to be intelligent enough to determine these values for themselves more often than not. The absolutely essential thing your sitemap needs to contain is a full directory of the URLs on your website that you wish to be indexed.
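For orientation, a small sitemap following the sitemaps.org protocol might look like the sketch below. The URLs, date and values are placeholders. The first entry carries only the required location, and the second shows the optional fields:

```xml
<?xml version="1.0" encoding="UTF-8"?>
<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <!-- Minimal entry: the URL alone is enough -->
  <url>
    <loc>http://www.example.com/</loc>
  </url>
  <!-- Entry with the optional fields filled in (placeholder values) -->
  <url>
    <loc>http://www.example.com/shop/widget.html</loc>
    <lastmod>2012-06-01</lastmod>
    <changefreq>monthly</changefreq>
    <priority>0.8</priority>
  </url>
</urlset>
```

If in doubt, a file made up entirely of entries like the first one already does the essential job of listing every page you want indexed.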

Common SEO mistakes

Paying for link building

Link building is the process of increasing the number of links on other sites to your website. One way this is gamed is to manufacture links to your website en masse in an effort to feign authority. It’s a quantity over quality methodology that may have been successful in Google’s earlier days, but as Google’s algorithms have evolved this methodology offers diminishing returns.

What happens when you pay an ‘SEO firm’ to link build for you? More often than not, it will spam other websites on your behalf with automated tools. It’s a selfish tactic – you’re receiving negligible (if any at all) benefits at the expense of honest webmasters' time – they have to clean it up off their sites.

Earlier this year Google released an algorithmic change named Penguin. The Penguin update’s intention is to devalue websites that engage in underhand tactics such as spam link building. A highly unethical tactic in the SEO world called ‘negative SEO’ has come into the limelight since the Penguin update. Negative SEO is the act of engaging in black-hat SEO tactics on behalf of your competitors with the objective of getting them penalised.

It’s unlikely your startup will be negatively SEO’d: it takes a concerted effort, money – and a distinct lack of ethics. However, if you’re paying for a sustained link building campaign for your startup you’re running the risk of shooting yourself in the foot and being devalued by Google’s algorithms. Repairing the damage of a bad quality link building campaign can be extremely costly, difficult and time consuming.

Paying for ‘Link Building Packages’ should be a huge turn off. There are negligible (if any at all) benefits, and a huge amount of downside. It’s often the hallmark of an unethical and poor quality SEO firm.

Keyword density

Reading up on SEO, you have probably come across terms such as ‘keyword density’, which refers to the percentage of words in a particular body of text that are relevant to the search terms you are interested in. The theory is that if you hit a specific density of keywords in a body of text you will be ranked higher in search results.

Keyword density is best understood as an oversimplification of a numerical statistic called tf*idf (term frequency multiplied by inverse document frequency). tf*idf reflects the importance of a word in a body of text, or in a collection of bodies of text, far more accurately than rudimentary keyword density measurements. Even so, tf*idf as described mathematically probably isn’t the end of the story. It’s likely search engines have modified this statistic and weighted it differently in different cases to improve the quality of returned results.
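For reference, one common textbook formulation of tf*idf is shown below. This is a sketch of the general idea only, and there is no suggestion that any search engine uses it in this exact form. Here tf(t, d) is the share of words in document d that are the term t, and N is the number of documents in the collection D:

```latex
\[
\mathrm{tfidf}(t, d, D) = \mathrm{tf}(t, d) \times \mathrm{idf}(t, D),
\qquad
\mathrm{idf}(t, D) = \log \frac{N}{\lvert \{ d' \in D : t \in d' \} \rvert}
\]
```

As a worked example using base 10 logarithms: a term that appears 3 times in a 100 word page has tf = 0.03. If it appears in 10 of 1,000 documents, idf = log(1000/10) = 2, giving a score of 0.06. A common word with the same tf that appears in 900 of the 1,000 documents has idf of roughly 0.046 and a score of roughly 0.0014. Keyword density sees only the 0.03 in both cases, which is why it is the cruder measure.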

What conclusion should we draw from this as a new startup? You should probably ignore it all. When you’re writing content such as a new blog post you need to remind yourself of your objectives – you’re trying to write content that people will want to read. Text tuned to specific keyword densities has a potentially large downside: it becomes increasingly obscure. A well written body of text will likely attract more good quality links and social shares, which in turn will increase the value of your website in search engines’ eyes. Don’t worry about keyword densities: worry about the quality of your writing.

Ignoring clickability of search engine results

When designing your page's title and meta description, it’s easy to over-engineer them specifically for the search engines. It’s important to remember that your actual end objective is to get real people to click on search results. If you’re ranked on the first page of a search result, the text extracted by the search engine needs to be concise, descriptive and appealing for the visitor to click on. When designing these aspects of a page, which are likely to be relayed into the search engine result, strike a balance between the benefit of potentially increased rankings and the user friendliness and clickability of that content.

Play by the rules

Good quality SEO tactics that play by the rules (known as white-hat) are the safest bet for the long term. It may be tempting at times to engage in black-hat tactics – certainly the arguments presented by the black-hats can be seductive – yet you risk alienating what is inevitably going to be one of your major, and free, sources of traffic. Risking this channel of potential customers is not a price a startup should be willing to pay.

Playing by the rules is a sustainable long term strategy, and this should align perfectly with your ambitions as a new startup.
