Epic Games just created a scarily realistic human on an iPhone

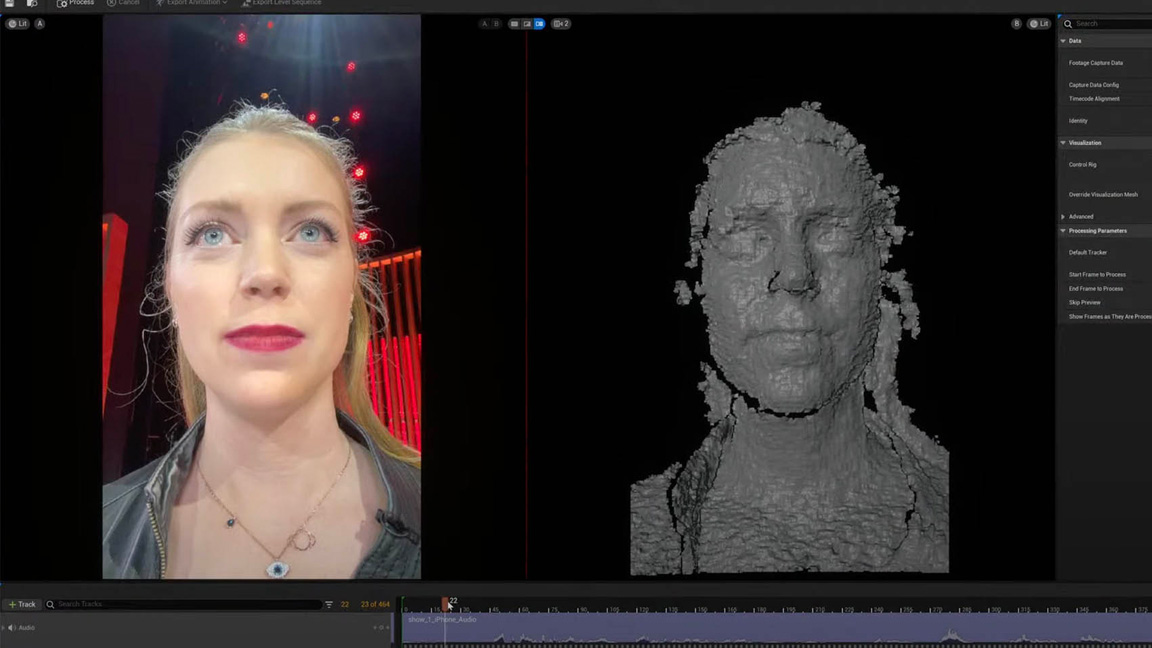

When Epic Games took the stage at GDC this week, everyone was blown away by the Unreal Engine 5.2 tech demo that showcased how easy it is to create incredible photoreal characters, or MetaHumans. But few realised everything being shown was created in real time… on an iPhone.

Earlier in the week, game developer Ninja Theory showed off the latest footage from the highly anticipated Senua's Saga: Hellblade 2, in which actress Melina Juergens runs through a range of highly realistic emotions. The footage was created using the same MetaHuman Animator. The game is coming exclusively to Xbox Series X, but the tech used will be open to every game dev. (If you need to learn the basics, read our Unreal Engine 5 tutorial on character design.)

Once the buzz around the new Hellblade 2 tech demo had died down (see the full video below), Epic Games and actress Juergens were on hand at the Unreal 5.2 keynote to run through the new MetaHuman Animator tools, and everything demoed was done live using just an iPhone (and a souped-up PC workstation).

Juergens took to the stage and an iPhone on a tripod was placed in front of her. She simply went through a few facial expressions. There was no heavy stereo helmet-mounted camera rig or lengthy setup process. In a couple of minutes the footage was taken and the expressive performance captured and placed onto a digital likeness of Juergens.

What impresses me is the speed and versatility of Epic Games' new animation tools. The demo showed how Unreal Engine 5.2 can create a realistically animated model from just three shots of Juergens; even the seasoned actress was taken aback. This is the actress's MetaHuman Identity (DNA) and can be used on any project she performs in; it can also be edited and built upon for other projects.

To show this in action, the same animation just captured using an iPhone was then placed onto a number of alternative characters, revealing how the same footage can be reused across all manner of models, from other realistic humans to a stylised cartoon boy. And again, let's remember this was captured on an iPhone, in minutes, with little setup.

Unreal Engine 5.2's new MetaHuman Animator is artist-friendly facial animation software of a kind I've never seen before. The process is so simple that the demo had Juergens' alter ego up and speaking in minutes, and Epic Games is keen to stress this is for everyone, from those with MoCap experience to small indie teams and hobbyists. MetaHuman Animator gives everyone the same facial animation tools available to high-end video game and film VFX teams, and that's impressive.

If you're a team that makes use of MoCap, then MetaHuman Animator can also be tied into your existing MoCap suits, such as Xsens, for body capture. It offers a timecode so the facial animation can be aligned with your body MoCap and audio for a complete character animation process.

It's hard not to be impressed by the new MetaHuman Animator. The demo created by Ninja Theory is frighteningly realistic and shows Juergens in close-up performing a range of expressions. Her lips tremble, her eyes wince, and small muscles I've never seen before in a game character emote with such realism that I had to do a double take.

If this is the future of game development (and I'm reminding myself this was captured on an iPhone in minutes, though the final Ninja Theory demo would have been worked on for a lot longer), we're in for an emotional time when creative teams and artists get their hands on this technology.

There's no better time to start animating or get into game development, either. Take a look at our guide to the best laptops for animation and read up on Unreal Engine 5. The MetaHuman Plugin for Unreal Engine is free to download and works with iPhone 11 and more recent releases. Visit the Unreal Engine website for more details and tutorials.

Ian Dean is Editor, Digital Arts & 3D at Creative Bloq, and the former editor of many leading magazines. These titles included ImagineFX, 3D World and video game titles Play and Official PlayStation Magazine. Ian launched Xbox magazine X360 and edited PlayStation World. For Creative Bloq, Ian combines his experiences to bring the latest news on digital art, VFX, video games and tech, and in his spare time he doodles in Procreate, ArtRage, and Rebelle while finding time to play Xbox and PS5.