How facial performance capture has evolved from Avengers: Infinity War to The Fantastic Four: First Steps

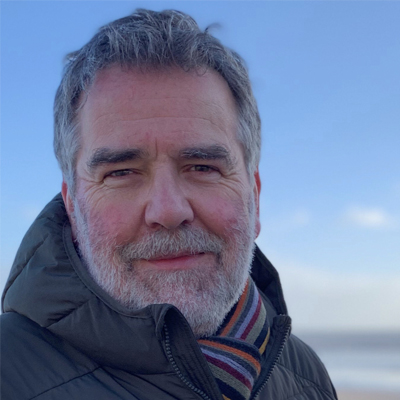

Visual Effects Supervisor Phil Cramer discusses how advances in Digital Domain's Masquerade are shaping VFX.

Established in the early 1990s, Digital Domain has sustained its profile as a major presence in visual effects, contributing to hugely popular movies that have included the iconic Avengers: Infinity War and Avengers: Endgame.

A distinctive aspect of its work over the past decade and a half has been in facial performance-capture and its realisation on screen through animation and visual effects (for more inspiration, see this year's VES Awards winners and our pick of the best movie CGI). As part of our new Film in Focus series, we caught up with the studio's VFX supervisor Phil Cramer to learn about how the tech has evolved.

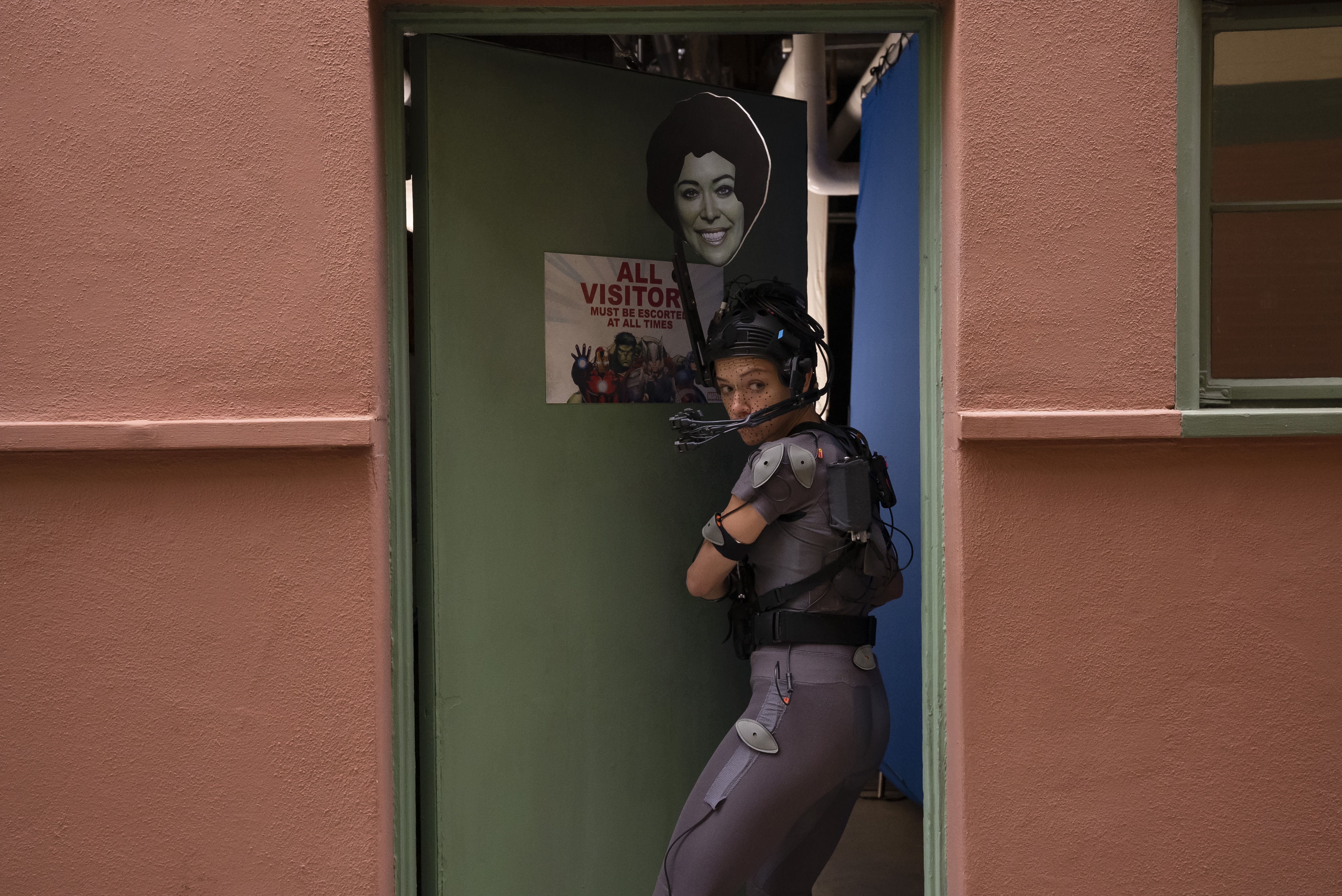

Central to that work has been the development of Digital Domain’s proprietary software, Masquerade. This system takes images of the actor’s face from a helmet-mounted camera and automatically transforms them into high-resolution, per-frame moving scans of the actor’s face.

Earlier versions of Masquerade were used to translate all of Josh Brolin’s performances into Thanos’s facial motion in Avengers: Infinity War and Avengers: Endgame.

“With digital characters, it’s not just about the animation,” Phil notes. “Every little aspect contributes to the believability. If you think of Thanos or She-Hulk, the technology keeps building and evolving. Digital Domain has always been at the forefront of facial technology.

“Each of these Masquerade releases, you know, builds on the others, uses the tech we all worked on, and then we refine it. What we're doing now is building on fifteen years of facial development.”

Masquerade 2.0, showcased in Digital Domain’s work for She-Hulk: Attorney at Law, gave artists the ability to continually edit and alter the facial capture results, while machine-learning smoothed out the rough edges in a matter of days and weeks rather than months.

“For me, what I focus on the most is ensuring that the actor’s face is represented in the motion, and that the nuances and actual motion of the performance come through,” Phil explains.

“This gives us a baseline to work from. Previously, in our facial pipeline, we would apply tracking markers on the actors’ faces, and track each individual marker by hand to record the data. We would then interpret this back onto the animation rig. However, it was a very convoluted process that took up to a week per shot.

“This was during the very early stages. Our goal was to extract more data utilizing these very high-res images of the actors’ faces, and we began to think, ‘We have these 60 dots that don’t represent the face enough. What else can we get?’ So we went back to the drawing board.

“We wanted to get a better understanding of the skin motion, so we introduced this additional layer and called it the delta: it would carry additional high-frequency detail from the actor; just the very, very fine detail for the animators to use. They couldn't fully control it, but they could turn it on and off in different areas and control the strength of it. And I think these advancements made Thanos incredibly successful.

“That was sort of the rollout of the original Masquerade. We had a much bigger seat in the industry because of Thanos, and everyone was intrigued by what we were doing.”
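The delta layer Phil describes, fine detail added on top of the rig-driven face with a per-area on/off and strength control, can be illustrated with a small sketch. This is not Digital Domain's implementation: the function name `apply_delta`, the per-vertex data layout and the sample values are all assumptions made purely for illustration.

```python
from typing import List, Tuple

Vec3 = Tuple[float, float, float]


def apply_delta(base: List[Vec3], delta: List[Vec3], strength: List[float]) -> List[Vec3]:
    """Add a per-vertex detail offset to a rig-driven mesh.

    base     -- vertex positions produced by the animation rig (hypothetical layout)
    delta    -- captured high-frequency offsets, one per vertex
    strength -- per-vertex weight: 0.0 switches the detail off, 1.0 applies it fully
    """
    if not (len(base) == len(delta) == len(strength)):
        raise ValueError("base, delta and strength need one entry per vertex")

    result = []
    for (bx, by, bz), (dx, dy, dz), s in zip(base, delta, strength):
        s = min(1.0, max(0.0, s))  # keep the weight inside [0, 1]
        result.append((bx + s * dx, by + s * dy, bz + s * dz))
    return result


# Hypothetical three-vertex patch of skin: full detail, half detail, detail off.
base = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (0.0, 1.0, 0.0)]
delta = [(0.0, 0.0, 0.5), (0.0, 0.0, 0.5), (0.0, 0.0, 0.5)]
strength = [1.0, 0.5, 0.0]

final = apply_delta(base, delta, strength)
```

In this sketch the animator never edits the delta itself, only the strength weights, which mirrors the limited control described in the interview.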

For The Fantastic Four: First Steps, Digital Domain developed a revolutionary new pipeline utilizing the studio's newest proprietary markerless facial capture technology Masquerade3, delivering 300 intricate shots featuring The Thing. Notably, the Masquerade3 pipeline was shared across four other vendors on the film, an uncommon achievement in the VFX industry.

Digital Domain provided all post-viz assembly scenes that relied on Xsens and Masquerade3 data cut to specific timecode ranges that were provided by the client. The previs vendor, The Third Floor, received raw dumps of the Masquerade3 data on the character rigs.

This unified approach gave the other vendors a starting point for the facial animation, ensuring consistency in character performance and quality across every shot, regardless of the studio executing it.

“In our current form with Masquerade3,” Phil goes on to explain, “the exciting thing is that there is no more need for markers, and so it's much quicker for the actors. It was much easier on our end, too, because the calibrations were simplified.”

Addressing proprietary software development, Phil notes: “We have a few main areas where we're investing a lot of energy. We’re known for faces, and so we want to be able to always have the latest solutions for that. Proprietary development is not tied to a particular project normally. It is an ongoing development where we then find projects to apply it to, or test the newer version on.

“The next step for Masquerade will be based on what we feel would be exciting and have an edge (which I cannot go into in too much detail here). Most importantly, at Digital Domain, we focus on having an artistic approach to technology.”

James has written about movies and popular culture since 2001. His books include Blue Eyed Cool: Paul Newman, Bodies in Heroic Motion: The Cinema of James Cameron, The Virgin Film Guide: Animated Films and The Year of the Geek. In addition to his books, James has written for magazines including 3D World and Imagine FX.
