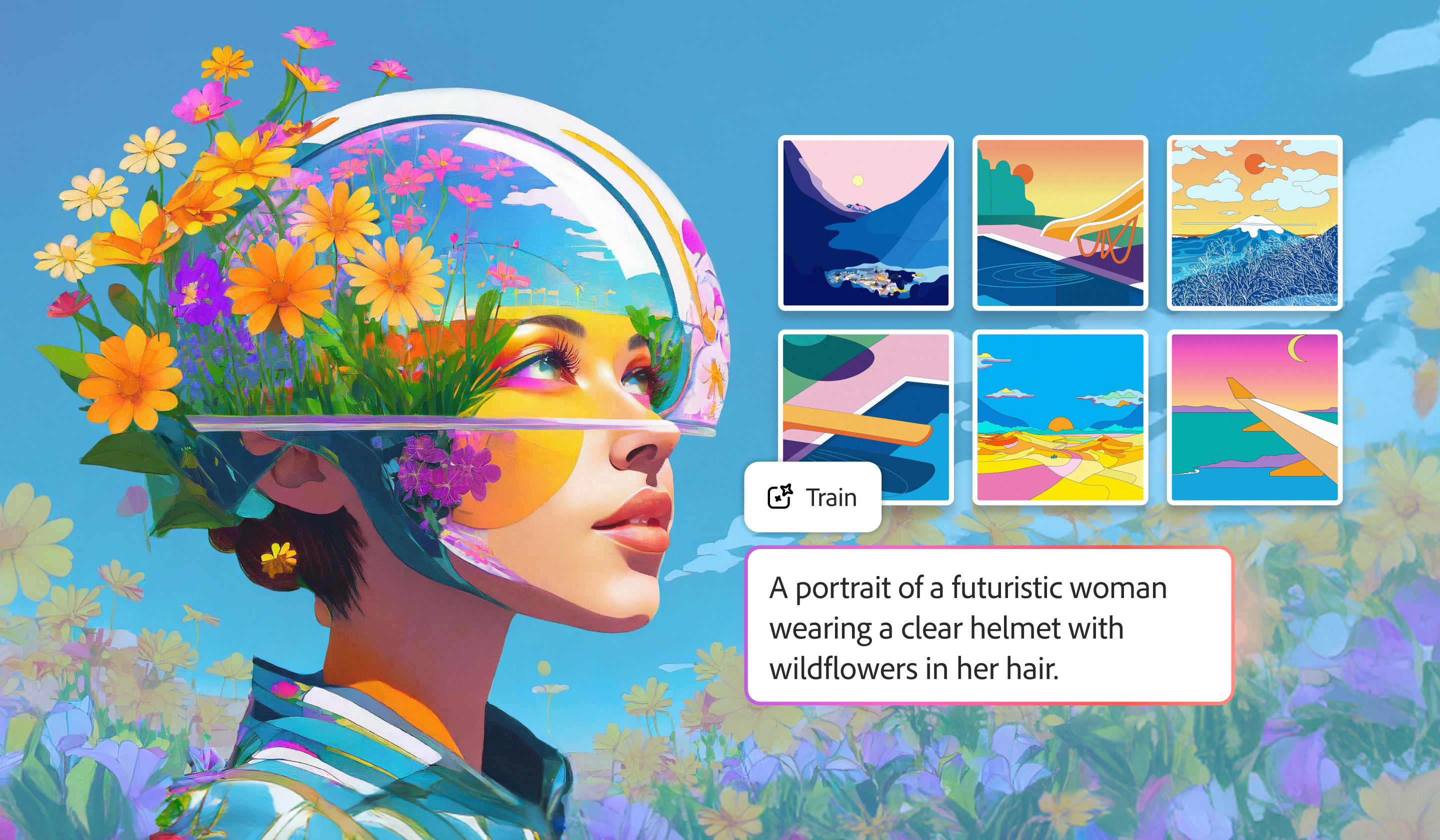

Adobe Firefly's custom AI models "preserve the unique soul of your work"

Deepa Subramaniam on what makes Firefly's latest AI advance so exciting for creatives.

Adobe recently announced that its custom models for Firefly are now available in public beta. These enable users to generate images and content that's specifically designed to work with a brand – think consistent colour palettes, character designs and icons.

We think this could be a game-changer for AI – it could be the thing that finally tames this technology and enables creators to use it consistently to create their own aesthetic or brand, without spending hours on tiny tweaks.

To find out more, we caught up with Deepa Subramaniam, vice president of product marketing for creative professionals at Adobe.

Why should creatives be excited about Firefly Custom Models?

Deepa Subramaniam: Firefly custom models solve one of the biggest challenges in generative AI: consistency. Your style is yours, and yours alone – but until now, maintaining it across AI outputs has been time-consuming and difficult for creative pros and their teams.

Now in broad public beta, Firefly custom models let you turn your creative style into a reusable model trained on your own images, and optimised for ideation in character, illustration, and photographic styles. The result is a faster, more reliable creative partner that preserves the unique 'soul' of your work while enabling faster iteration and exploration. And your models are private by default, so the content you create with them remains entirely yours.

You can then pair your custom model with 30+ other top industry models – including options like Google’s Nano Banana 2, Veo 3.1, Runway Gen-4.5, and Adobe’s Image Model 5 – and use Firefly’s editing tools, like Quick Cut, which turns raw footage into a structured first cut in minutes, or expanded image editing capabilities, to take ideas from concept to production-ready in minutes.

How have custom models already transformed workflows?

DS: We first introduced this capability for enterprise customers, where teams are managing large volumes of content and need to move quickly without losing what makes their brand distinct. Starting there has been incredibly valuable. It has given us a clear view into how teams work, what they need to scale, and how to build this in a way that fits into individual creative workflows.

Generative AI is now core to creative work: 86 per cent of creators are using creative generative AI, and 76 per cent say it’s helped grow their business and personal brand. As adoption grows, the challenge shifts to scaling output and consistency without sacrificing quality, and that’s where custom models are making a real difference.

They can help remove much of the time-consuming, repetitive work – such as fine-tuning things like lighting, colour palettes or line weights across every asset – by capturing those details upfront, so creatives can focus more on creative decisions, craft and direction.

For many creative teams, that’s already translating into real, tangible impact. Amazon Fresh reduced turnaround time by 93 per cent across hundreds of images. Newell Brands is creating campaign visuals five times faster while maintaining brand precision, and IPG Health built an entirely new brand identity in 10 days. Others are using custom models to accelerate ideation from weeks to days, or to deliver thousands of personalised assets at scale.

Firefly custom models give creatives a consistent foundation to build from, so they can move faster and stay in control without starting from scratch.

Are there particular industries that would benefit from custom models?

DS: Organisations in marketing-heavy industries, like retail, consumer goods, financial services, healthcare and education, have already seen strong impact from custom models because they’re producing large volumes of content and need to keep it consistent across markets, channels and campaigns.

What these teams have in common is a constant need to scale assets, variations and personalisation, without losing the integrity of their brand. Custom models help by capturing that visual identity upfront, so teams can generate and adapt content much more efficiently while staying on-brand.

We’re seeing a similar pattern in media and entertainment with Firefly Foundry, where studios and IP owners are training models on their own proprietary content to extend creative worlds, move faster across production workflows and scale storytelling across formats. In both cases, the underlying need is the same: how do you produce more, with greater speed and control, while preserving what makes the work distinctive?

From brands to individual artists, the goal is the same: scaling creativity without losing what makes it yours.

With the further rollout of Project Moonlight, how does Adobe see chat interfaces featuring in creatives’ workflows over the coming years?

DS: We’re seeing conversational interfaces become a more natural way for creatives to work, where you can simply describe what you want and have AI help bring it to life. With Project Moonlight, we see chat evolving into a core part of the creative workflow, not as a separate tool, but as something embedded directly within the apps people already use.

What’s important is that this isn’t about replacing hands-on creation completely. It’s about combining the ease of conversation with control. You can use natural language to explore ideas, kickstart a concept, learn how to do something, or handle time-consuming tasks, then move seamlessly into precise editing when you want to refine the work. That hybrid model – conversation plus craft – is really where we see the most value.

Over time, these assistants will become more context-aware and connected across the entire creative process. With Project Moonlight, that means understanding your style, your assets and your goals, and helping coordinate work across multiple apps – from ideation through to final output. The role of chat becomes more about an ongoing collaboration, where the system helps carry context forward and keeps you moving without breaking your flow.

Ultimately, the goal is to make complex creative workflows feel intuitive, so creatives can focus more on direction, while the system supports execution alongside them.

This builds on Adobe’s broader vision for bringing conversational AI to people everywhere they work and create – in our apps, including AI Assistants in Acrobat, Adobe Express and Adobe Photoshop, along with third-party chat platforms like OpenAI’s ChatGPT and Microsoft 365 Copilot.

Rosie Hilder is Creative Bloq's Deputy Editor. After beginning her career in journalism in Argentina – where she worked as Deputy Editor of Time Out Buenos Aires – she moved back to the UK and joined Future Plc in 2016. Since then, she's worked as Operations Editor on magazines including Computer Arts, 3D World, Paint & Draw and Mac|Life. In 2018, she joined Creative Bloq, where she now assists with the daily management of the site, including growing the site's reach, getting involved in events, such as judging the Brand Impact Awards, and helping make sure our content serves the reader as best it can.

- Daniel John, Design Editor
