How we taught AI to understand light

It was transformational, and a major win for artists and filmmakers.

Most AI lighting tools work by imitation. They’ve seen enough images to guess what lighting should look like, and most of the time, that’s good enough. Until you push them.

Relighting is usually where things fall apart (even in the best video editing software). Drop a subject into a new environment, push contrast, or try to match a specific mood, and the cracks show up fast: skin goes flat, highlights feel pasted on, and hair stops behaving like hair. This is when it becomes obvious that imitation isn’t the same thing as understanding.

If you want to relight a human convincingly, you have to deal with how light actually behaves. That means how it wraps around a face, how it scatters through flyaway hair, and how different materials all respond differently to the same light source.

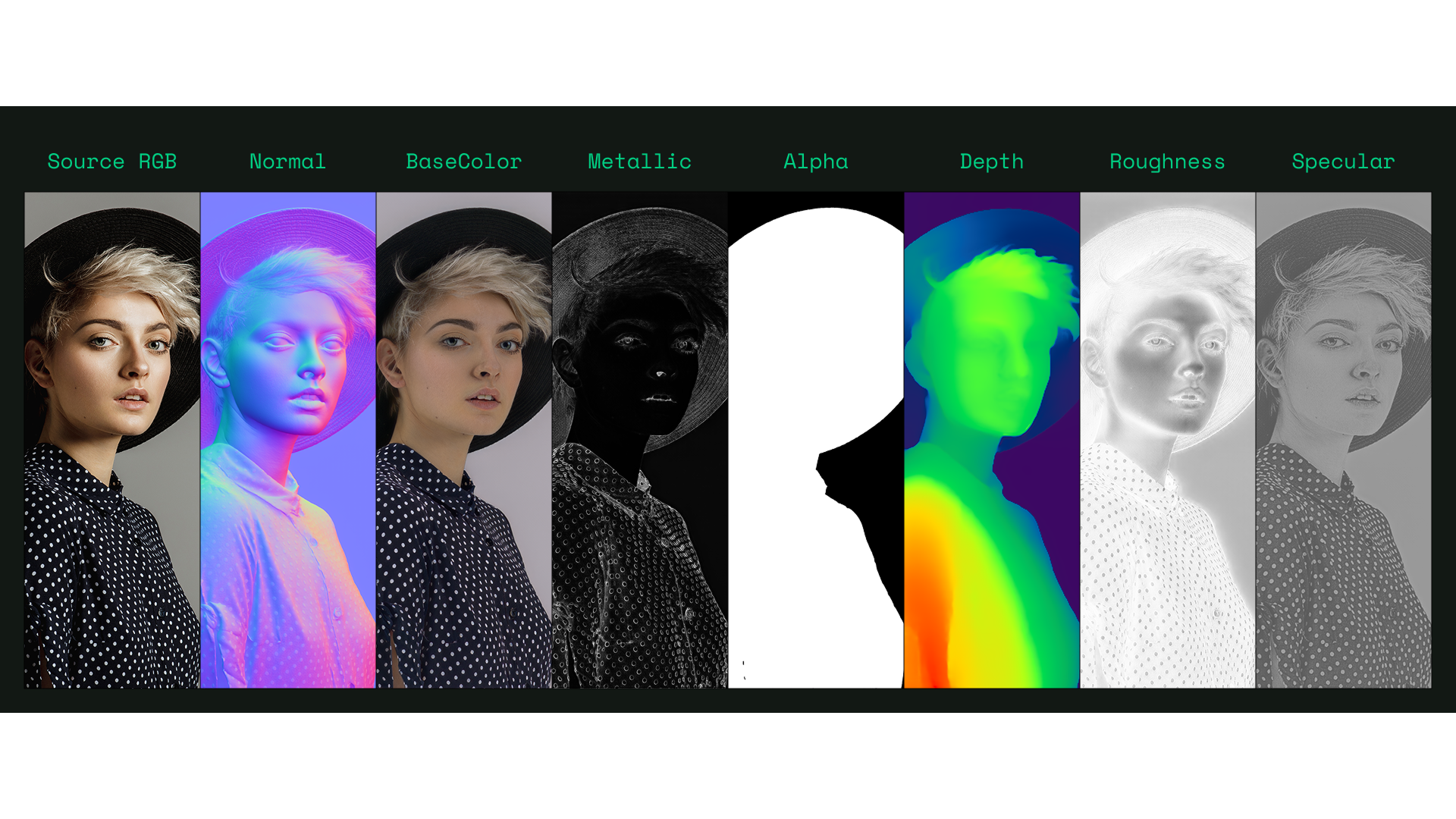

Relighting goes beyond tweaking brightness or colour temperature. Faces and bodies are complex combinations of geometry, material, and reflection. Skin softens highlights in a way fabric never will, hair introduces unpredictable scattering, and clothing ranges from matte to highly reflective. When AI treats all of this as a single visual surface, the result might look fine at first glance, but it won’t hold up once you start changing the lighting with intent.

This is the problem many creators run into with AI tools today. If the system doesn’t understand why light behaves the way it does, you lose control, and lighting becomes fragile: small adjustments introduce artefacts and composites feel disconnected from their environments.

Computer graphics has addressed this problem in different ways for decades. Instead of guessing appearances, physically based rendering describes how light reflects, diffuses, and scatters across surfaces. Change the light, and the result changes in a predictable way.
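To make "predictable" concrete, here is a minimal sketch of the simplest building block of physically based shading, Lambert's cosine law for diffuse reflection. The function name and the albedo value are illustrative assumptions, not taken from any particular renderer.

```python
import math


def _normalize(v):
    """Scale a 3-vector to unit length."""
    length = math.sqrt(sum(c * c for c in v))
    return tuple(c / length for c in v)


def lambert_diffuse(normal, light_dir, albedo, light_intensity=1.0):
    """Diffuse brightness of a surface point under Lambert's cosine law.

    normal    -- direction the surface faces
    light_dir -- direction from the surface toward the light
    albedo    -- fraction of light the surface reflects diffusely (0..1)
    """
    n = _normalize(normal)
    l = _normalize(light_dir)
    # Brightness falls off with the cosine of the angle between the two;
    # surfaces facing away from the light receive nothing.
    cos_theta = max(0.0, sum(a * b for a, b in zip(n, l)))
    return albedo * light_intensity * cos_theta


# A surface facing the camera (+z); swing the light from head-on to grazing.
normal = (0.0, 0.0, 1.0)
for angle_deg in (0, 30, 60, 90):
    a = math.radians(angle_deg)
    light_dir = (math.sin(a), 0.0, math.cos(a))
    print(angle_deg, round(lambert_diffuse(normal, light_dir, albedo=0.8), 3))
```

Moving the light changes the output along a known curve rather than by guesswork, which is the property the rest of this piece relies on.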

That way of thinking turns out to matter just as much for AI.


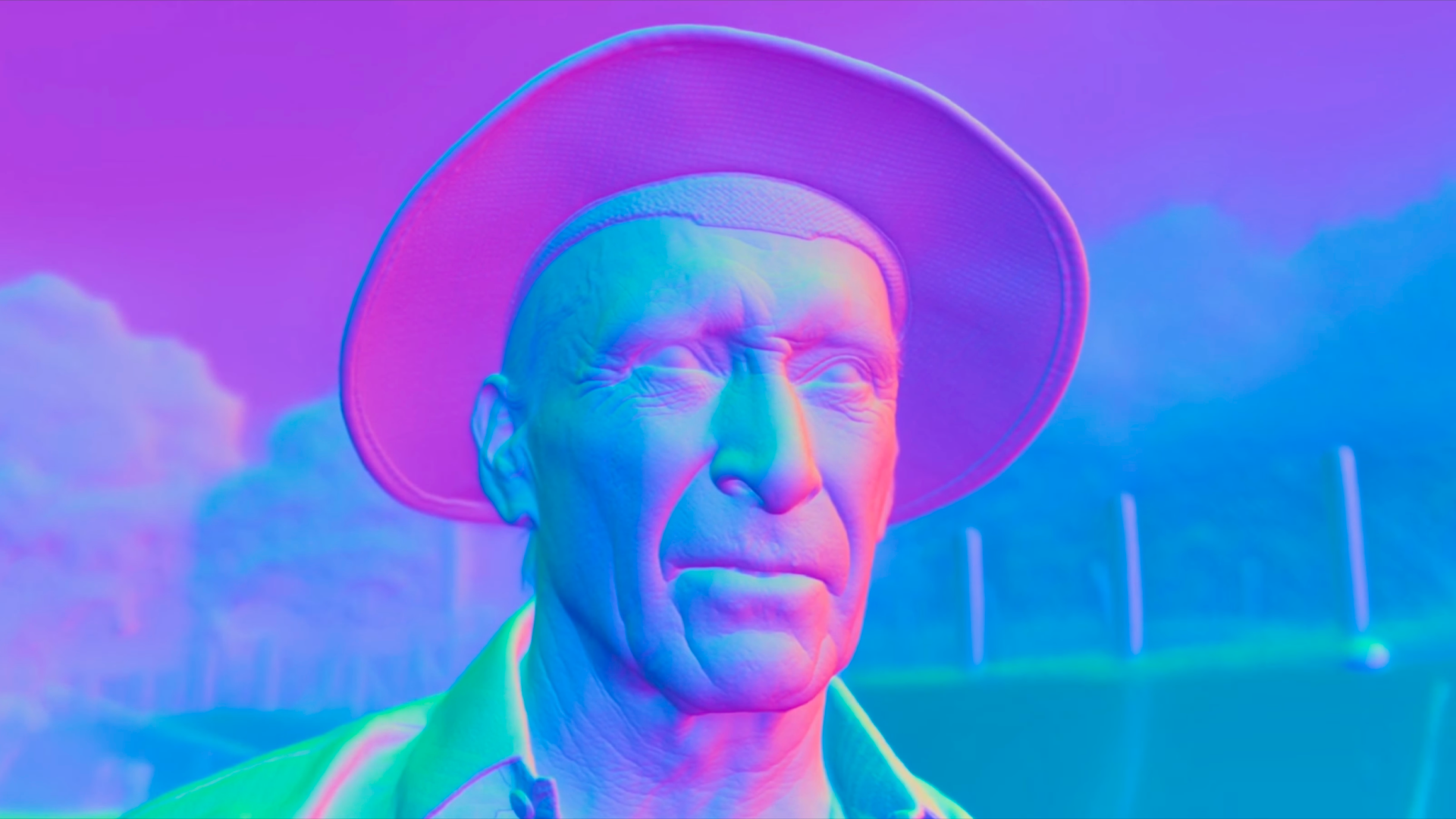

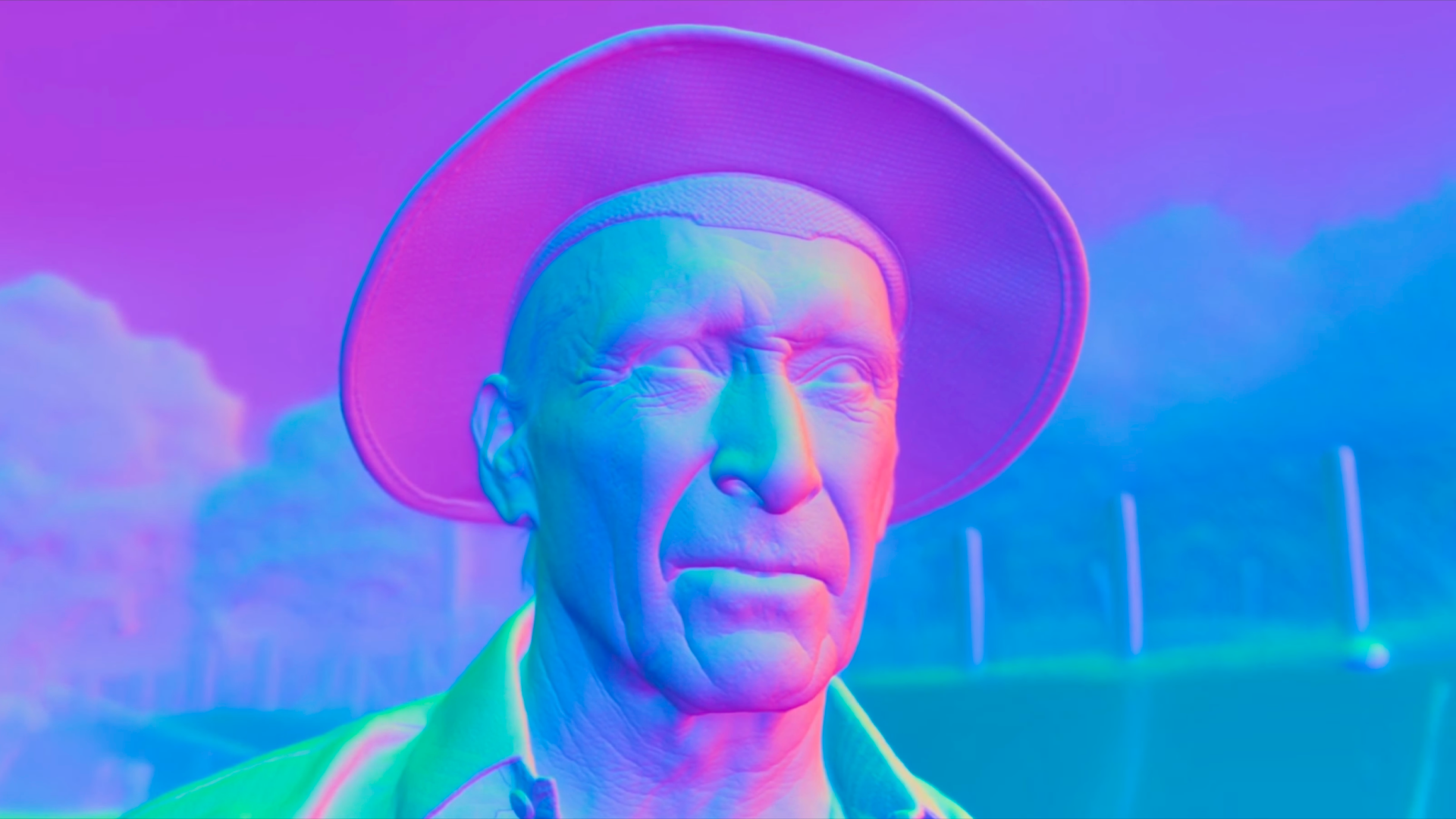

Rather than training a system to guess what a relit image should look like, you can ask it to reason about light. A portrait can be broken down into physical components – such as surface orientation and material response – and then relit based on how those components would respond to new illumination.
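As a rough illustration of relighting from components, the sketch below shades a single pixel from its decomposed properties under a new light. It is not a description of any production pipeline: it assumes the decomposition has already produced a surface normal and a few material values per pixel, uses a Lambert diffuse term plus a Blinn-Phong highlight as a simple stand-in for a fuller material model, and every name and number in it is hypothetical.

```python
import math


def normalize(v):
    """Scale a 3-vector to unit length (a zero vector is returned unchanged)."""
    length = math.sqrt(sum(c * c for c in v))
    if length == 0.0:
        return (0.0, 0.0, 0.0)
    return tuple(c / length for c in v)


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def relight_pixel(normal, albedo, specular, shininess, light_dir,
                  view_dir=(0.0, 0.0, 1.0)):
    """Shade one pixel from its physical components under a new light.

    normal    -- surface orientation at this pixel
    albedo    -- diffuse reflectance (0..1)
    specular  -- strength of the highlight (0..1)
    shininess -- how tight the highlight is (higher means glossier)
    """
    n, l, v = normalize(normal), normalize(light_dir), normalize(view_dir)
    n_dot_l = max(0.0, dot(n, l))
    if n_dot_l == 0.0:
        return 0.0  # the light is behind the surface
    diffuse = albedo * n_dot_l
    # Blinn-Phong: the highlight peaks where the normal lines up with the
    # half vector between the light and view directions.
    half = normalize(tuple(a + b for a, b in zip(l, v)))
    highlight = specular * max(0.0, dot(n, half)) ** shininess
    return min(1.0, diffuse + highlight)


# Hypothetical material values for two surfaces facing the camera.
materials = {
    "skin": dict(normal=(0.0, 0.0, 1.0), albedo=0.6, specular=0.1, shininess=8),
    "satin": dict(normal=(0.0, 0.0, 1.0), albedo=0.3, specular=0.8, shininess=64),
}

for name, m in materials.items():
    front = relight_pixel(light_dir=(0.0, 0.0, 1.0), **m)
    side = relight_pixel(light_dir=(1.0, 0.0, 1.0), **m)
    print(name, round(front, 3), round(side, 3))
```

Because the same light is applied to different material values, the soft surface and the glossy one respond differently without any per-image guessing.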

The difference shows up immediately when you push the lighting. Highlights sit where you expect them to. Skin stays soft without turning plastic, while hair reacts naturally instead of collapsing into noise. The lighting feels connected to the space rather than layered on top of the image.

Once we took this approach, something else changed too: lighting stopped fighting the subject.

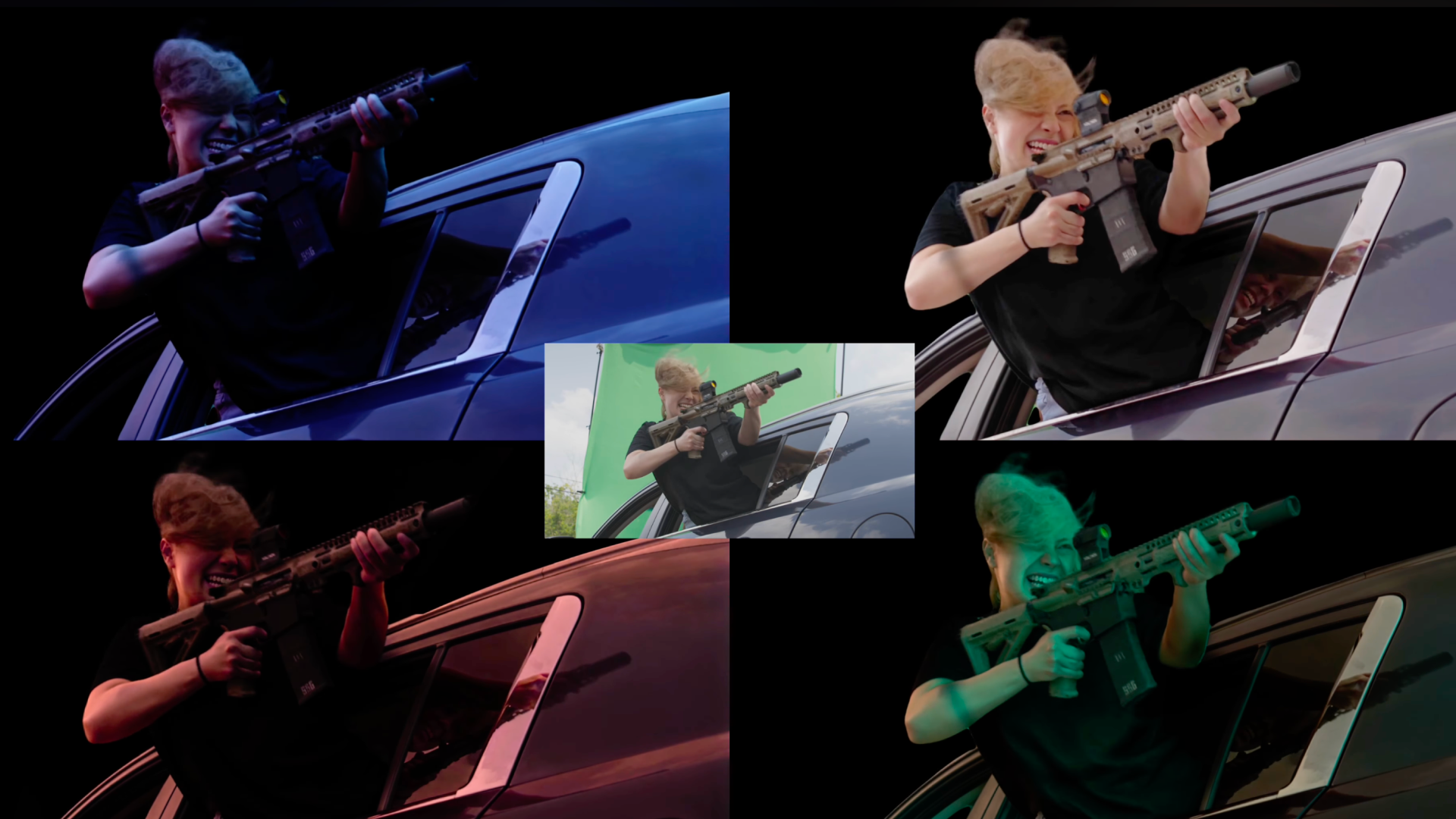

For artists and filmmakers, that’s the real win. When lighting behaves predictably, creative decisions become flexible. The door is now open to exploring moods after the shoot instead of locking everything on set, or to integrating live-action performances into new environments without spending hours fixing inconsistencies.

AI doesn’t need to be creative; it needs to be reliable, supporting the humans who use it to make art. AI that understands how light works enhances storytelling and supports artists as they shape moods and atmospheres. That’s where physics and creativity meet, not as opposites, but as collaborators.

Hoon Kim is the Founder of Beeble, an AI virtual production platform enabling physically accurate relighting and photorealistic compositing in post. With a background in deep learning and computer vision, he focuses on bridging real-world physics and creative workflows for filmmakers.
